Pricing verified April 30, 2026.

AI disclosure is the legal and technical practice of labeling synthetic media so viewers, platforms, and regulators can verify its origin. From August 2, 2026, Article 50 of the EU AI Act requires machine-readable transparency markers on AI-generated media. C2PA Content Credentials and Google SynthID are the two most widely deployed techniques for meeting that bar today. Penalties for non-compliance can reach EUR 15,000,000 or 3% of worldwide annual turnover, whichever is higher.

This guide explains the regulatory stack, how C2PA works under the hood, which True Models ship provenance by default, how each major platform handles disclosure on upload, where the system breaks, and a 6-step playbook you can run before the August deadline.

Pricing and Legal Compliance Notice

Pricing last verified April 30, 2026. API rates are sourced from fal.ai; consumer subscription plans are from each product’s official site. Screenshots and UI references elsewhere in this article may reflect earlier versions. Prices change frequently, so double-check with fal.ai or the vendor’s site before making spending decisions. Legal compliance information is current as of April 30, 2026. Regulatory deadlines and penalties cited here (particularly the EU AI Act Article 50 application date of August 2, 2026) are based on published official sources. Consult legal counsel regarding your specific jurisdiction’s compliance obligations.

5 Static Anchor Data Points (verified April 2026)

- EU AI Act Article 50 transparency obligations apply from August 2, 2026; the regulation itself entered into force on August 1, 2024 (European Commission, Regulation 2024/1689).

- Maximum penalty for Article 50 violations is EUR 15,000,000 or 3% of worldwide annual turnover, whichever is higher.

- C2PA Specification v2.3 is the current published standard as of April 2026, with v2.4 in draft review (c2pa.org).

- TikTok reports applying AI labels to more than 1.3 billion videos since activating C2PA reading in May 2024 (TikTok Newsroom).

- AI Video Bootcamp benchmark across 15 True Models (April 2026): high-tier video APIs average USD 0.12 per second, image APIs average USD 0.04 per generation.

What AI Disclosure Actually Means in 2026

AI disclosure means marking AI-generated content so it is identifiable as synthetic, through a visible label, a machine-readable cryptographic manifest, or an invisible watermark. In 2026 it shifted from a best practice to a legal obligation for any creator whose content reaches EU residents or large US social platforms.

There are three disclosure layers a creator needs to understand.

The first is visible labels, such as a text overlay that reads “Made with AI” or a platform badge. These are required by TikTok and YouTube when an AI tool materially alters a realistic scene.

The second is C2PA Content Credentials, an open cryptographic standard for embedding signed provenance manifests directly into media files. C2PA is governed by the Coalition for Content Provenance and Authenticity, a joint initiative between Adobe, Microsoft, Intel, Truepic, the BBC, and others.

The third is invisible watermarks, like Google SynthID, which encode identifiable signals into pixel data so the content can be recognized as AI even after compression, screenshots, or format changes.

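The EU AI Act does not name specific products. Its recitals list watermarks, metadata identification, and cryptographic methods for proving provenance among the “state of the art” techniques that can meet Article 50 obligations, and C2PA and SynthID are the most widely deployed examples. Most professional creators in 2026 need both layers, because C2PA survives when the file format is preserved, and SynthID survives when it is not.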

For deeper context on how individual True Models like Veo 3.1, Seedance 2, and Nano Banana 2 handle provenance, see our full True Model benchmark.

The Regulatory Stack: Who Is Watching and What They Require

The EU AI Act is the primary global forcing function for AI disclosure. Article 50 applies from August 2, 2026, with penalties of up to EUR 15M. The US has no federal AI disclosure law, but a patchwork of state statutes, including California SB 926, Texas TRAIGA, and Tennessee’s ELVIS Act, creates enforceable rules for election deepfakes, non-consensual intimate imagery, and voice cloning.

EU AI Act Article 50 in plain English

Article 50(2) of Regulation (EU) 2024/1689 requires providers of generative AI systems to ensure their outputs are marked in a machine-readable format and detectable as artificially generated.

Article 50(4) requires deployers of AI systems that generate deepfakes to disclose that the content has been artificially generated, with narrow exceptions for artistic, creative, or satirical works.

The obligations apply extraterritorially: a US creator can fall under the rule when their AI-generated output is used in the EU, for example when it is published to EU residents. Enforcement sits with national market surveillance authorities and the new European AI Office.

Fines scale with the violation. Article 99 sets the maximum at EUR 15,000,000 or 3% of total worldwide annual turnover of the preceding financial year, whichever is higher, for non-compliance with transparency obligations.
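The “whichever is higher” rule is easy to work through as arithmetic. The sketch below is illustrative only: the function and constant names are invented for this example, and it computes the ceiling quoted above, not the fine an authority would actually impose in a given case.

```python
FIXED_CAP_EUR = 15_000_000   # fixed ceiling quoted above for transparency breaches
TURNOVER_PERCENT = 3         # percentage of total worldwide annual turnover


def penalty_ceiling_eur(worldwide_turnover_eur: int) -> float:
    """Return the higher of the fixed ceiling and the turnover-based ceiling."""
    turnover_based = worldwide_turnover_eur * TURNOVER_PERCENT / 100
    return max(FIXED_CAP_EUR, turnover_based)


# Turnover at which the percentage ceiling overtakes the fixed one.
CROSSOVER_TURNOVER_EUR = FIXED_CAP_EUR * 100 // TURNOVER_PERCENT

for turnover in (2_000_000, CROSSOVER_TURNOVER_EUR, 1_000_000_000):
    ceiling = penalty_ceiling_eur(turnover)
    print(f"turnover EUR {turnover:,}: ceiling EUR {ceiling:,.0f}")
```

Under the rule as quoted, the fixed EUR 15,000,000 figure is the binding ceiling below EUR 500 million in turnover, and the 3% figure takes over above it.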

US federal and state laws

The US approach is fragmented. There is no federal AI disclosure law for general synthetic media, but three categories of enforceable rules already exist.

| Jurisdiction | Statute | Scope | Effective |
|---|---|---|---|
| United States (federal) | TAKE IT DOWN Act (2025) | Non-consensual intimate imagery, including AI-generated | Active 2025 |
| California | SB 926 | Criminalizes sexually explicit AI deepfakes | Active 2025 |
| California | AB 2655 / AB 2839 | Election-related deepfakes during campaign windows | Active 2024 |
| Texas | Texas Responsible AI Governance Act (TRAIGA) | High-risk AI systems, deceptive use | Phased 2026 |
| Tennessee | ELVIS Act | Voice and likeness protection (music focus) | Active 2024 |
| New York | Synthetic Performer Law | Disclosure of digital replicas in media | Active 2025 |
| European Union | AI Act Article 50 | All synthetic media, machine-readable marking | August 2, 2026 |

Creators working on election-adjacent content, celebrity likenesses, or adult material face the tightest exposure. Creators producing commercial brand work for EU audiences face the broadest exposure, because Article 50 has no small-creator carveout in its current text.

How C2PA Works: The Technical Infrastructure

C2PA embeds cryptographically signed provenance manifests into media files using the JUMBF container format. Each manifest contains assertions about the asset (who created it, how, with what tool), a claim that wraps those assertions with an ECDSA or EdDSA signature, and hard bindings that hash the binary payload so tampering is detectable.

JUMBF containers and manifest placement

C2PA metadata is stored in the JPEG Universal Metadata Box Format (JUMBF). In an MP4 video, the provenance data lives in a specific UUID box with the identifier D8FEC3D6-1B0E-483C-9297-5828877EC481, placed before the moov box and the first mdat box to avoid playback corruption. In a JPEG, the data sits in an APP11 marker. In PNG and SVG, similar standardized injection points apply.
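To make the MP4 placement concrete, here is a minimal sketch that walks the top-level boxes of an ISO base media file and reports whether a `uuid` box carrying the identifier quoted above is present. It assumes the standard box layout (a 4-byte big-endian size, a 4-byte type, and an optional 64-bit size) and runs against a synthetic byte string rather than a real video. It only locates the box; it does not parse or validate the manifest, and the helper names are invented for this example.

```python
import io
import struct
import uuid
from typing import BinaryIO, Iterator, Optional, Tuple

# Identifier quoted in the text above for the C2PA box in MP4 files.
C2PA_BOX_UUID = uuid.UUID("D8FEC3D6-1B0E-483C-9297-5828877EC481")


def iter_top_level_boxes(f: BinaryIO) -> Iterator[Tuple[str, int, int, int]]:
    """Yield (box_type, offset, size, header_len) for each top-level box."""
    f.seek(0, io.SEEK_END)
    end = f.tell()
    offset = 0
    while offset + 8 <= end:
        f.seek(offset)
        size, raw_type = struct.unpack(">I4s", f.read(8))
        header_len = 8
        if size == 1:                      # 64-bit size stored after the type
            if offset + 16 > end:
                return
            size = struct.unpack(">Q", f.read(8))[0]
            header_len = 16
        elif size == 0:                    # box extends to the end of the file
            size = end - offset
        if size < header_len or offset + size > end:
            return                         # malformed or truncated: stop scanning
        yield raw_type.decode("latin-1"), offset, size, header_len
        offset += size


def find_c2pa_box(f: BinaryIO) -> Optional[int]:
    """Return the byte offset of the C2PA uuid box, or None if it is absent."""
    for box_type, offset, size, header_len in iter_top_level_boxes(f):
        if box_type == "uuid" and size >= header_len + 16:
            f.seek(offset + header_len)
            if f.read(16) == C2PA_BOX_UUID.bytes:
                return offset
    return None


def make_box(box_type: bytes, payload: bytes = b"") -> bytes:
    """Build a minimal box for the synthetic demo file below."""
    return struct.pack(">I4s", 8 + len(payload), box_type) + payload


# Synthetic stand-in for an MP4: ftyp, C2PA uuid box, moov, mdat.
demo = io.BytesIO(
    make_box(b"ftyp", b"isom\x00\x00\x00\x00")
    + make_box(b"uuid", C2PA_BOX_UUID.bytes + b"manifest-bytes")
    + make_box(b"moov")
    + make_box(b"mdat", b"\x00" * 4)
)
order = [box_type for box_type, *_ in iter_top_level_boxes(demo)]
print(order, find_c2pa_box(demo))
```

A scan like this is a quick way to check whether an upload or transcode step has dropped the box; a real verifier is still needed to confirm the manifest inside it.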

A complete manifest includes the author, the claim generator (the tool or platform that produced the file), an ingredients list (any source files the asset was derived from), and a full edit history of actions taken on the file.

Cryptographic signatures

C2PA recommends elliptic curve cryptography, using ES256 (ECDSA on the P-256 curve with SHA-256) or EdDSA (Ed25519) as the signature algorithm. Signing certificates are issued by certificate authorities accepted into the C2PA trust list.

Hard bindings versus soft bindings

A hard binding is a SHA-256 hash of the file’s immutable binary payload. Because MP4 metadata headers shift during web delivery, C2PA uses exclusion ranges so the hash only covers the audio-visual payload, not the mutable header bytes.
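The idea of an exclusion range can be sketched in a few lines. The example below is a simplified illustration, not the C2PA hashing procedure itself: it assumes exclusions are given as (start, length) byte ranges and uses toy byte strings in place of a real MP4. The function name is invented for this example.

```python
import hashlib
from typing import Iterable, Tuple


def hash_with_exclusions(data: bytes, exclusions: Iterable[Tuple[int, int]]) -> str:
    """SHA-256 of data, skipping each (start, length) byte range."""
    digest = hashlib.sha256()
    pos = 0
    for start, length in sorted(exclusions):
        if start < pos or length < 0 or start + length > len(data):
            raise ValueError("exclusion ranges must be in bounds and non-overlapping")
        digest.update(data[pos:start])   # hash the bytes before the excluded range
        pos = start + length             # jump over the excluded range
    digest.update(data[pos:])            # hash whatever follows the last range
    return digest.hexdigest()


payload = b"audio-visual payload"
as_signed = b"HEADER-v1" + payload        # file as the signer saw it
as_delivered = b"HEADER-v2" + payload     # header rewritten during delivery
tampered = b"HEADER-v1" + b"audio-visual PAYLOAD"

header_range = [(0, len(b"HEADER-v1"))]   # exclude the mutable header bytes
reference = hash_with_exclusions(as_signed, header_range)
print(hash_with_exclusions(as_delivered, header_range) == reference)  # True
print(hash_with_exclusions(tampered, header_range) == reference)      # False
```

The rewritten header leaves the hash unchanged, while a single altered payload byte breaks it, which is the property the hard binding relies on.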

A soft binding is a perceptual fingerprint that survives format changes, such as a SynthID watermark or a perceptual hash. Soft bindings are the only mechanism that can recover provenance after a social platform strips the embedded manifest, because they allow a verifier to look up the original manifest in a remote repository.
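The lookup step can be illustrated with a toy perceptual fingerprint. The sketch below uses a simple average hash over a tiny grayscale grid as a generic stand-in; it is not SynthID's algorithm, and the in-memory dictionary and the `manifest-0001` identifier are hypothetical placeholders for a remote manifest repository.

```python
from typing import Dict, List, Optional

Grid = List[List[int]]  # grayscale pixel values, 0-255


def average_hash(pixels: Grid) -> int:
    """One bit per pixel: 1 if the pixel is brighter than the image mean."""
    flat = [p for row in pixels for p in row]
    mean = sum(flat) / len(flat)
    bits = 0
    for p in flat:
        bits = (bits << 1) | int(p > mean)
    return bits


def hamming_distance(a: int, b: int) -> int:
    """Number of bit positions in which two fingerprints differ."""
    return bin(a ^ b).count("1")


def lookup_manifest(
    fingerprint: int, repository: Dict[int, str], max_distance: int = 2
) -> Optional[str]:
    """Return the manifest ID of the nearest known fingerprint, if close enough."""
    if not repository:
        return None
    nearest = min(repository, key=lambda known: hamming_distance(known, fingerprint))
    if hamming_distance(nearest, fingerprint) <= max_distance:
        return repository[nearest]
    return None


original = [
    [10, 200, 10, 200],
    [200, 10, 200, 10],
    [10, 200, 10, 200],
    [200, 10, 200, 10],
]
recompressed = [[p + 7 for p in row] for row in original]   # mild brightness shift
unrelated = [[255 - p for p in row] for row in original]    # a different image

# Hypothetical repository mapping fingerprints to stored manifest IDs.
repository = {average_hash(original): "manifest-0001"}

print(lookup_manifest(average_hash(recompressed), repository))
print(lookup_manifest(average_hash(unrelated), repository))
```

The recompressed copy still resolves to the stored manifest even though no metadata travelled with it, while the unrelated image does not match.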

Leonard Rosenthol of Adobe, co-author of the C2PA specification, has publicly described the standard as providing “transparency, not authentication,” meaning it tells you what happened to a file, not whether the file is true.

The True Model Provenance Matrix (Original Research)

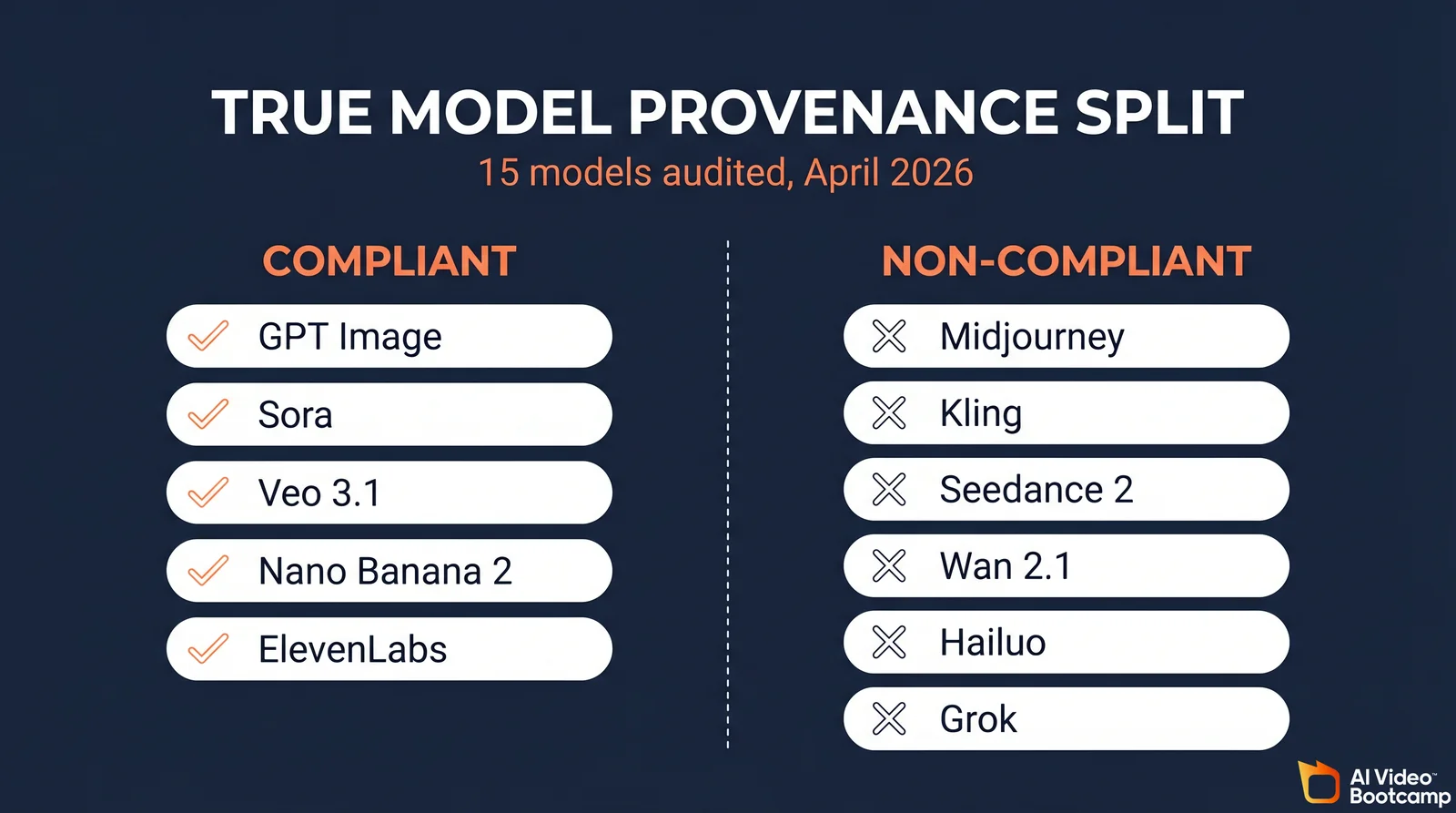

Of the 15 True Models benchmarked by AI Video Bootcamp, only five ship default cryptographic provenance: OpenAI’s GPT Image and Sora, Google’s Veo and Nano Banana, and ElevenLabs. Midjourney, Kling, Seedance, Wan, Hailuo, Grok, Recraft, and Ideogram either ship nothing mandatory or rely on proprietary internal tracking that does not meet EU AI Act machine-readable requirements by itself.

Proprietary True Model provenance matrix

This table is AI Video Bootcamp’s original audit of the 15 True Models across four disclosure dimensions. Cells marked “No public claim” mean the vendor has not published a C2PA or SynthID commitment in their official documentation as of April 2026.

| Model | C2PA by default | SynthID or equivalent watermark | C2PA Steering Committee member | Disclosure status |
|---|---|---|---|---|
| GPT Image (OpenAI) | Yes | No | Yes | Compliant |
| Sora (OpenAI) | Yes | No | Yes | Compliant |
| Veo 3.1 (Google) | Yes (manifests) | Yes (SynthID) | Yes | Compliant |
| Nano Banana 2 (Google) | Yes | Yes (SynthID) | Yes | Compliant |
| ElevenLabs | Yes (since Nov 2025) | Proprietary acoustic watermark | Yes | Compliant |
| Flux.2 Pro (Black Forest Labs) | Optional via deployer | invisible-watermark library | No | Partial, depends on deployer |
| LTX (LTX-2) | Internal tracking | No | No | Partial |
| Grok Imagine (xAI) | Embeds C2PA; often stripped | No | No | Partial |
| Midjourney | No | No | No | Non-compliant |
| Kling (Kuaishou) | No public claim | Visible watermark on free tier | No | Non-compliant |
| Seedance 2 (ByteDance) | No public claim | Internal TikTok watermark | No | Non-compliant |
| Seedream 4.5 / 5.0 (ByteDance) | No public claim | Internal TikTok watermark | No | Non-compliant |
| Wan 2.1 / 2.2 (Alibaba) | No (open weights) | No | No | Non-compliant |
| Hailuo (MiniMax) | No public claim | Proprietary safety review | No | Non-compliant |
| Recraft V3 | No public claim | Proprietary tracking | No | Non-compliant |
| Ideogram V3 | Not advertised | Internal safety filters | No | Non-compliant |

How to read this table. “Compliant” means the model ships a disclosure signal that can satisfy EU AI Act Article 50(2) without extra developer work. “Partial” means the signal is available but only when the deployer enables it. “Non-compliant” means there is no published mechanism and the creator carries the full disclosure burden.

ByteDance and Kling are flagged as non-compliant not because they ignore safety, but because their published frameworks rely on internal TikTok or Kling infrastructure rather than the open C2PA standard that EU regulators expect.

For more on which models are worth paying for in 2026, see our Veo 3 vs Seedance vs Kling benchmark.

Platform Disclosure Rules: Where Your Content Lands

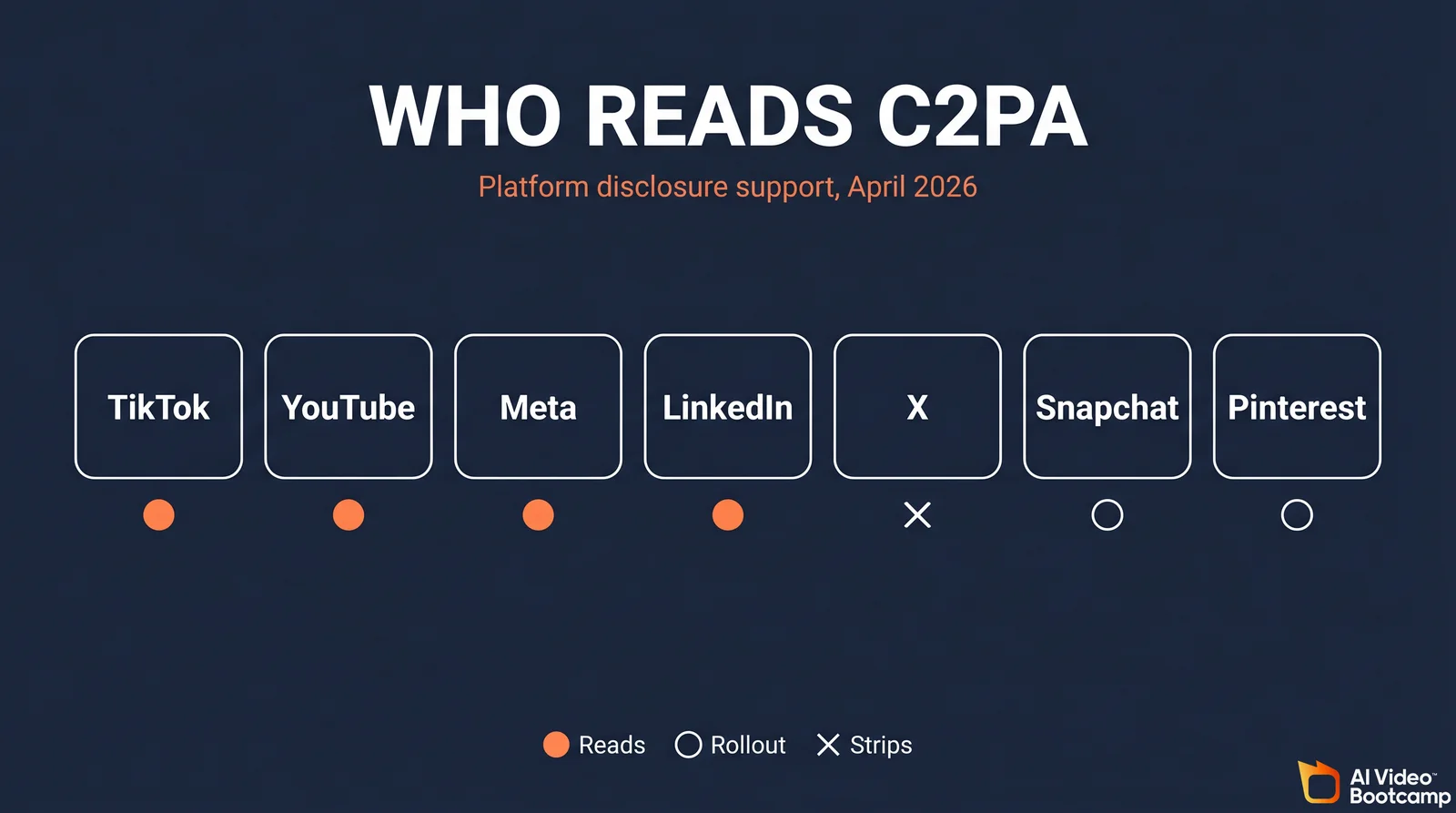

Major platforms diverge sharply on AI disclosure. TikTok and YouTube require creator disclosure and auto-label from C2PA. Meta uses “AI Info” tags. X does not enforce disclosure and strips provenance on upload. LinkedIn reads Content Credentials. Snapchat and Pinterest are in rollout. Relying only on file-level C2PA is insufficient; platform native labels are a second line of defense.

Platform comparison table

| Platform | Reads C2PA | Auto-labels from manifest | Creator disclosure required | Strips metadata on upload |
|---|---|---|---|---|
| TikTok | Yes (since May 2024) | Yes | Yes, for realistic AI content | Partial, C2PA preserved for verified sources |
| YouTube | Yes | Yes | Yes, mandatory checkbox | Yes, most metadata stripped |
| Meta (Facebook, Instagram) | Yes | Yes, “AI Info” label | Yes, for realistic AI | Yes |
| LinkedIn | Yes | Yes, “Content Credentials” badge | Recommended | Partial |
| X (Twitter) | No enforcement | No | No mandate | Yes, aggressive stripping |
| Snapchat | In rollout | In rollout | Recommended | Yes |
| Pinterest | In rollout | In rollout | Not mandated | Yes |

What this means in practice

If a creator uploads a Nano Banana 2 image with a full C2PA manifest to X, the platform strips the JUMBF box, the manifest is lost, and the image appears unlabeled. The only remaining signal is Google’s invisible SynthID watermark, which X does not currently read.

If the same creator uploads the same image to TikTok or LinkedIn, the platform reads the C2PA manifest, displays a badge, and the disclosure chain holds.

This is the single most important operational fact in AI disclosure right now: your compliance posture depends on the destination platform, not just on the source model.
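One way to make that dependency explicit in a publishing script is a small lookup keyed by destination. The sketch below encodes the “reads C2PA” status described in this section as of April 2026; the function and enum names are invented for this example, and the data will go stale as platforms change their behavior.

```python
from enum import Enum


class ReadsC2PA(Enum):
    YES = "yes"
    NO = "no"
    IN_ROLLOUT = "in rollout"


# Snapshot of the platform behavior described in this section.
PLATFORM_READS_C2PA = {
    "TikTok": ReadsC2PA.YES,
    "YouTube": ReadsC2PA.YES,
    "Meta": ReadsC2PA.YES,
    "LinkedIn": ReadsC2PA.YES,
    "X": ReadsC2PA.NO,
    "Snapchat": ReadsC2PA.IN_ROLLOUT,
    "Pinterest": ReadsC2PA.IN_ROLLOUT,
}


def auto_label_expected(file_has_c2pa: bool, platform: str) -> bool:
    """True only when the file carries a manifest AND the platform reads it.

    Unknown platforms and platforms still in rollout are treated as not
    reading C2PA, so the answer errs toward manual disclosure.
    """
    status = PLATFORM_READS_C2PA.get(platform, ReadsC2PA.NO)
    return file_has_c2pa and status is ReadsC2PA.YES


for destination in ("TikTok", "LinkedIn", "X", "Snapchat"):
    print(destination, auto_label_expected(True, destination))
```

A `False` result does not mean the upload is non-compliant; it means the manifest cannot be relied on to produce a label at that destination, so the platform's own AI label has to carry the disclosure.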

Pricing and Economics of the True Models

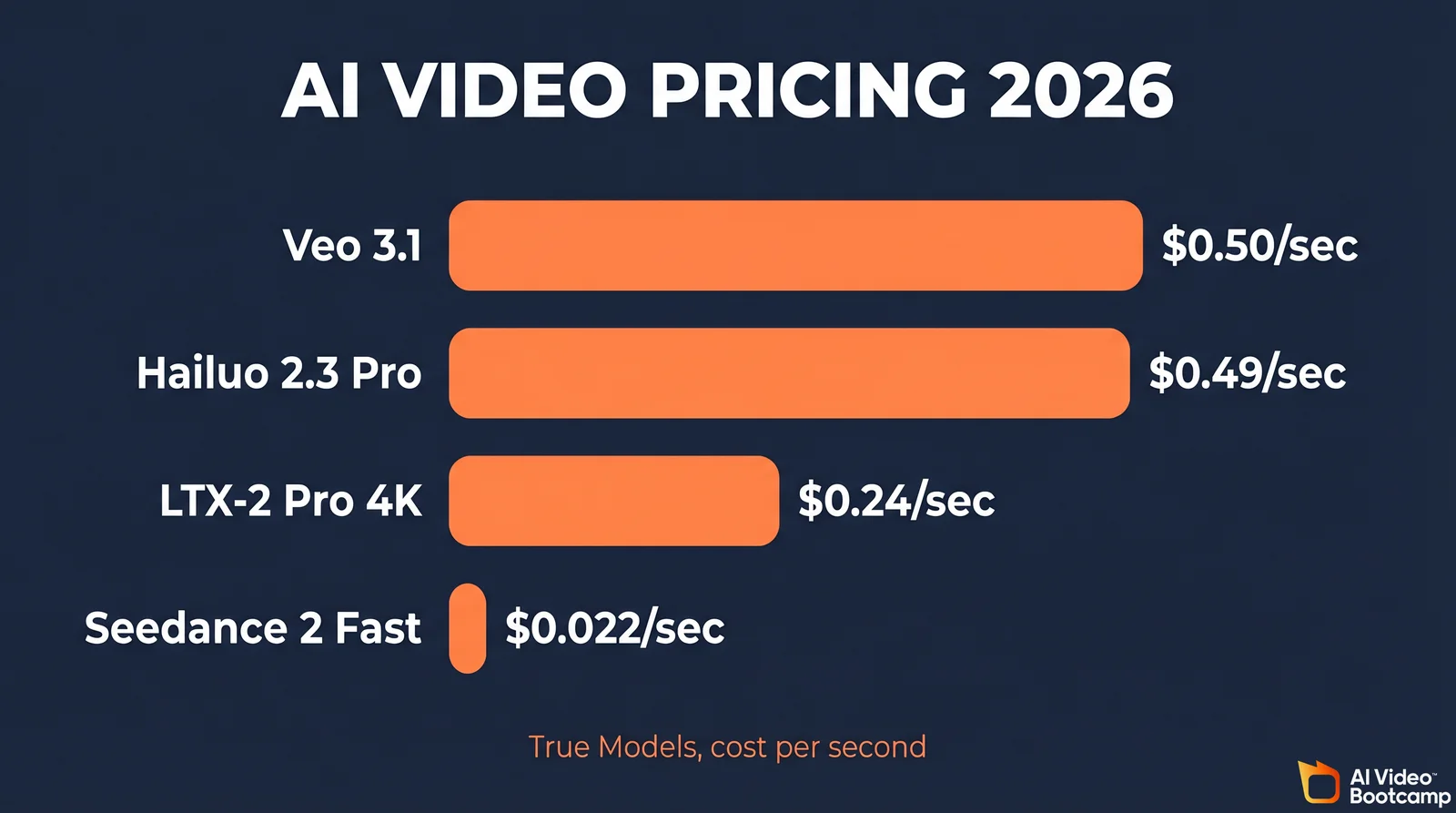

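AI Video Bootcamp’s April 2026 benchmark finds high-tier video APIs averaging USD 0.12 per second and image APIs clustering at USD 0.04 per generation. Among models with a per-second API rate, the most expensive (Veo 3.1 at roughly USD 0.50 per second) costs about 23 times as much as the cheapest (Seedance 2.0 Fast at USD 0.022 per second). Wan 2.1 appears higher in the table, at roughly USD 1.00 per second, but that figure reflects starter credit pricing rather than an API rate.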

Video generation pricing

| Model | Service tier | Resolution | Cost per second |
|---|---|---|---|
| Veo 3.1 (Google) | Standard | 1080p | ~USD 0.50 |
| LTX-2 Pro | Pro | 4K UHD | USD 0.24 |
| Seedance 2.0 Pro | Production | Standard | USD 0.247 |
| LTX-2 Fast | Fast | 1080p | USD 0.04 |
| Hailuo 2.3 Pro (MiniMax) | High Resolution | Standard | USD 0.49 |
| Hailuo 2.3 Standard | Standard | Standard | USD 0.28 |
| Seedance 2.0 Fast | Budget | Standard | USD 0.022 |
| Kling Pro | Pro Mode (5s) | 1080p | ~USD 0.20 (credit equivalent) |
| Wan 2.1 1080p | High Quality | 1080p | ~USD 1.00 (starter credit pricing) |
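To turn per-second rates into a budget figure, the arithmetic can be sketched as follows. The rates are copied from the table above (the Veo 3.1 figure is approximate, and the credit-priced Kling and Wan rows are left out); the function names are invented for this example.

```python
from decimal import Decimal

# Per-second API rates in USD, taken from the table above.
USD_PER_SECOND = {
    "Veo 3.1": Decimal("0.50"),            # approximate in the source table
    "Hailuo 2.3 Pro": Decimal("0.49"),
    "Hailuo 2.3 Standard": Decimal("0.28"),
    "Seedance 2.0 Pro": Decimal("0.247"),
    "LTX-2 Pro": Decimal("0.24"),
    "LTX-2 Fast": Decimal("0.04"),
    "Seedance 2.0 Fast": Decimal("0.022"),
}


def clip_cost(model: str, seconds: int) -> Decimal:
    """Cost in USD of generating one clip of the given length."""
    return USD_PER_SECOND[model] * seconds


def cost_ratio(expensive: str, cheap: str) -> Decimal:
    """How many times more one model costs than another, to one decimal place."""
    return (USD_PER_SECOND[expensive] / USD_PER_SECOND[cheap]).quantize(Decimal("0.1"))


print(clip_cost("Veo 3.1", 8))                       # 4.00
print(clip_cost("Seedance 2.0 Fast", 8))             # 0.176
print(cost_ratio("Veo 3.1", "Seedance 2.0 Fast"))    # 22.7
```

An 8-second clip therefore costs about USD 4.00 on Veo 3.1 and under USD 0.18 on Seedance 2.0 Fast, which is where the roughly 23x spread comes from.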

Image generation pricing

| Model | Resolution / unit | Cost |
|---|---|---|
| Nano Banana 2 (4K) | 4K | USD 0.09 |
| Nano Banana 2 (1K) | 1K | USD 0.04 |
| Nano Banana Edit | Image-to-image | USD 0.02 |
| Recraft V3 Raster | 1K | USD 0.04 |
| Recraft V3 Vector | Vector | USD 0.08 |
| Ideogram V3 Turbo | Standard | USD 0.03 |
| Ideogram V3 Quality + Character | Premium | USD 0.20 |
| Flux.2 Pro (first MP) | Per megapixel | USD 0.03 |
| Seedream 4.5 (BytePlus) | Per image | USD 0.04 |

Audio generation pricing (ElevenLabs)

ElevenLabs bills per 1,000 characters and hard-caps audio quality below the Pro tier.

| Plan | Monthly cost | Included characters | Quality cap |
|---|---|---|---|
| Free | USD 0 | 10,000 | 128 kbps |
| Starter | USD 5 | 30,000 | 128 kbps |
| Creator | USD 22 | 100,000 | 128 kbps |
| Pro | USD 99 | 500,000 | 192 kbps / PCM |
| Scale | USD 330 | 2,000,000 | 192 kbps / PCM |
| Business | USD 1,320 | Custom | 192 kbps / PCM |

The 128 kbps cap on Creator is a hidden trap for audiobook producers; a typical full-length audiobook runs around 480,000 characters and requires the Pro tier for acceptable fidelity.
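The plan choice can be checked with a short sketch. It uses the plan figures from the table above and assumes a single month with no overage or top-up purchases; the `Plan` class and `cheapest_plan` function are invented for this example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Plan:
    name: str
    monthly_usd: int
    included_chars: Optional[int]   # None where the table says "Custom"
    max_kbps: int


# Figures copied from the table above.
PLANS = [
    Plan("Free", 0, 10_000, 128),
    Plan("Starter", 5, 30_000, 128),
    Plan("Creator", 22, 100_000, 128),
    Plan("Pro", 99, 500_000, 192),
    Plan("Scale", 330, 2_000_000, 192),
    Plan("Business", 1_320, None, 192),
]


def cheapest_plan(chars_needed: int, min_kbps: int) -> Optional[Plan]:
    """Cheapest plan whose allowance and quality cap both cover the job.

    Plans with a custom allowance are skipped because their limit is unknown.
    """
    candidates = [
        plan for plan in PLANS
        if plan.included_chars is not None
        and plan.included_chars >= chars_needed
        and plan.max_kbps >= min_kbps
    ]
    return min(candidates, key=lambda plan: plan.monthly_usd, default=None)


audiobook = cheapest_plan(chars_needed=480_000, min_kbps=192)
print(audiobook.name if audiobook else "no fixed plan fits")
```

For the 480,000-character audiobook, Pro is the first plan that fits on both counts: Creator falls short on the character allowance as well as on the quality cap.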

Where C2PA Breaks: Distribution Failures

The weakest link in AI disclosure is the upload pipeline. Most social platforms compress images and videos on ingest and strip EXIF, XMP, and JUMBF data to save storage. Even a fully compliant file from Nano Banana 2 or Veo 3.1 lands on X or Facebook with its embedded provenance wiped. Soft bindings like SynthID are the only recovery mechanism today, and most platforms do not read them.

Analysis of the r/ArtificialIntelligence, r/photojournalism, and r/StableDiffusion communities shows persistent skepticism about whether C2PA can survive real distribution. The recurring complaints are predictable. Platform ingest pipelines strip metadata. Screenshots erase everything. Screen recordings re-encode the audio track and break acoustic watermarks. Third-party re-hosts (Imgur, Reddit image servers, Telegram) do not preserve manifests.

Dr. Hany Farid, UC Berkeley professor of digital forensics, has repeatedly argued in public conference talks and academic work that provenance-by-file-metadata cannot carry the burden alone, and that recovery-side soft bindings plus platform-level detection are the real backbone of any durable disclosure regime.

For creators, the practical consequence is that the initial upload is the only reliable disclosure layer. Everything downstream is best-effort.

6-Step AI Disclosure Compliance Playbook

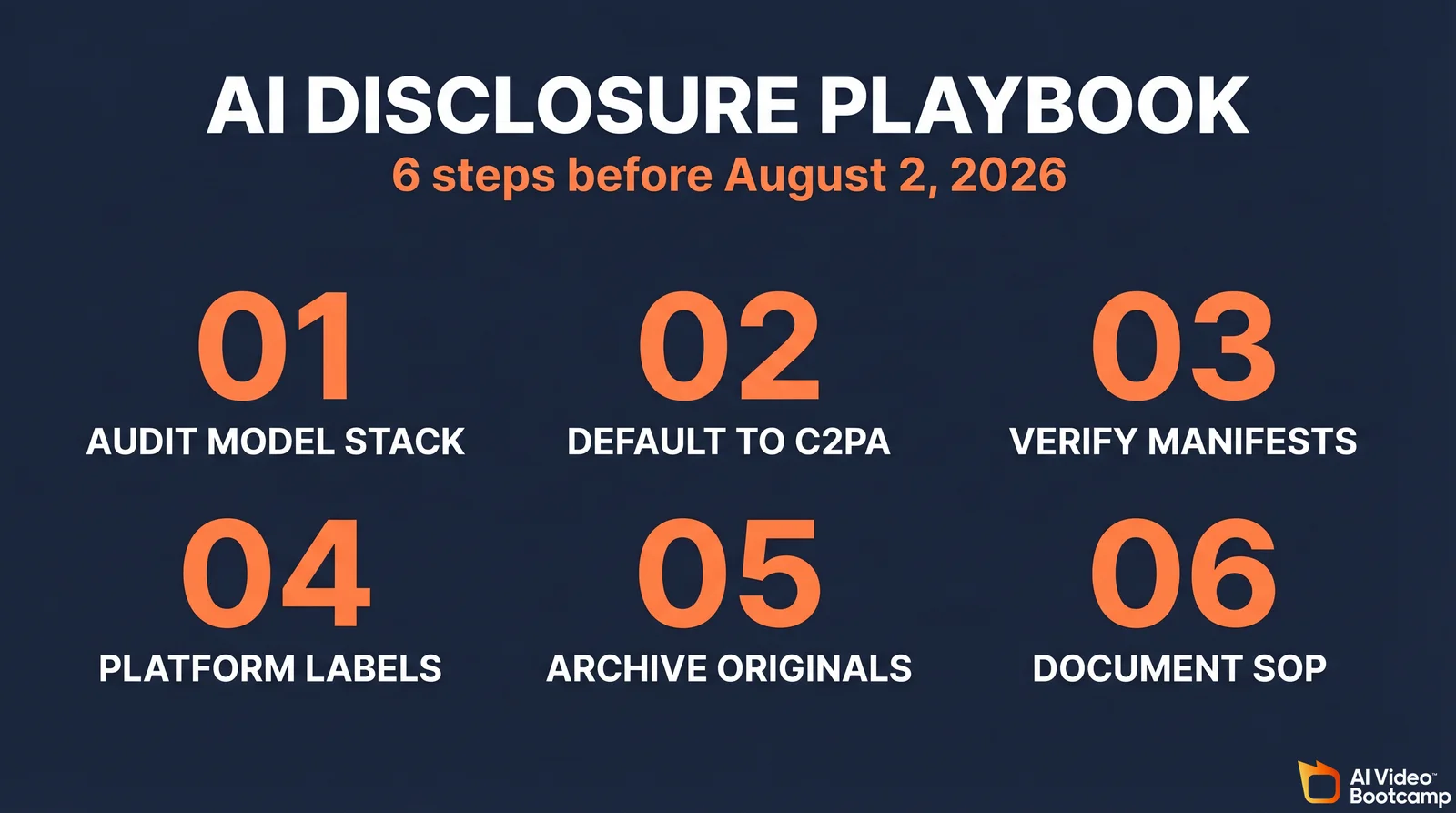

Before August 2, 2026, every AI video creator should audit their model stack, pick C2PA-capable models where possible, validate manifests before upload, use platform native AI labels, archive originals with manifests intact, and document the workflow so legal counsel can review it if needed.

Step 1: Audit your model stack

List every model in your pipeline. For each, record whether it ships default C2PA, default SynthID, optional C2PA, or nothing. Use the True Model Provenance Matrix above as the starting point. Flag any Midjourney, Kling, or Wan outputs as “manual disclosure required.”
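An audit like this can live in a small script rather than a spreadsheet. The sketch below encodes a few rows of the provenance matrix as of April 2026; the enum, catalog, and function names are invented for this example, and any model missing from the catalog is deliberately treated as needing manual disclosure.

```python
from enum import Enum
from typing import Dict, List


class Provenance(Enum):
    C2PA_AND_WATERMARK = "default C2PA + watermark"
    C2PA_DEFAULT = "default C2PA"
    C2PA_OPTIONAL = "optional C2PA (enable at deploy time)"
    NONE = "manual disclosure required"


# A subset of the True Model Provenance Matrix above.
CATALOG: Dict[str, Provenance] = {
    "Veo 3.1": Provenance.C2PA_AND_WATERMARK,
    "Nano Banana 2": Provenance.C2PA_AND_WATERMARK,
    "GPT Image": Provenance.C2PA_DEFAULT,
    "Sora": Provenance.C2PA_DEFAULT,
    "Flux.2 Pro": Provenance.C2PA_OPTIONAL,
    "Midjourney": Provenance.NONE,
    "Kling": Provenance.NONE,
    "Wan 2.2": Provenance.NONE,
}


def audit_stack(pipeline: List[str]) -> Dict[str, Provenance]:
    """Map each model in the pipeline to its provenance status.

    Unknown models fall back to NONE so gaps surface instead of hiding.
    """
    return {model: CATALOG.get(model, Provenance.NONE) for model in pipeline}


def manual_disclosure_required(pipeline: List[str]) -> List[str]:
    """Models whose outputs need a manual disclosure layer."""
    return [m for m, status in audit_stack(pipeline).items() if status is Provenance.NONE]


pipeline = ["Nano Banana 2", "Midjourney", "Flux.2 Pro", "Kling", "SomeNewModel"]
for model, status in audit_stack(pipeline).items():
    print(f"{model}: {status.value}")
print("flagged:", manual_disclosure_required(pipeline))
```

Defaulting unknown models to “manual disclosure required” is a conservative choice: a new tool added to the pipeline is flagged until someone checks its documentation.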

Step 2: Default to C2PA-capable models for EU-facing work

For content that will reach EU audiences, make OpenAI (GPT Image, Sora), Google (Veo 3.1, Nano Banana 2), and ElevenLabs your default. Reserve non-compliant models for personal, internal, or non-EU projects, or wrap their outputs with a manual disclosure layer.

Step 3: Validate manifests before publishing

Run outputs through a C2PA viewer such as contentcredentials.org/verify or the Adobe Content Credentials tool. Confirm the manifest is intact, the signature is valid, and the claim generator matches the model you used.
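If the verifier you use can export its result, the three checks can be automated as a pre-publish gate. The sketch below works on a hypothetical, simplified report dictionary: the keys `manifest_found`, `signature_valid`, and `claim_generator` are illustrative placeholders, not the output schema of any particular tool, and would need to be mapped to whatever your verifier actually emits.

```python
from typing import Any, Dict, List


def manifest_problems(report: Dict[str, Any], expected_generator: str) -> List[str]:
    """Return a list of problems; an empty list means all three checks passed.

    `report` uses hypothetical keys chosen for this example only.
    """
    if not report.get("manifest_found", False):
        return ["no C2PA manifest found"]

    problems: List[str] = []
    if not report.get("signature_valid", False):
        problems.append("signature did not validate")

    generator = str(report.get("claim_generator", ""))
    if expected_generator.lower() not in generator.lower():
        problems.append(
            f"claim generator {generator!r} does not mention {expected_generator!r}"
        )
    return problems


# Hypothetical reports standing in for real verifier output.
good = {"manifest_found": True, "signature_valid": True, "claim_generator": "Veo 3.1"}
stripped = {"manifest_found": False}
wrong_tool = {"manifest_found": True, "signature_valid": True, "claim_generator": "OtherTool/1.0"}

for name, report in (("good", good), ("stripped", stripped), ("wrong_tool", wrong_tool)):
    print(name, manifest_problems(report, expected_generator="Veo 3.1"))
```

Blocking the upload whenever the list is non-empty keeps a stripped or mismatched file from being published as if it were verified.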

Step 4: Use platform native AI labels

On TikTok, check “AI-generated content” in the post flow. On YouTube, check the “Altered content” box. On Meta, add the “AI Info” tag. On LinkedIn, rely on Content Credentials auto-detection. Do this in addition to C2PA, not instead of it.

Step 5: Archive originals with manifests

Keep the unedited, unuploaded original file with its full C2PA manifest in cold storage. If a platform later raises a question or a regulator asks for evidence, the archived original is the proof of compliant origin.
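A minimal archival routine might look like the sketch below. It assumes a local directory stands in for cold storage and that a byte-for-byte copy is enough to keep the embedded manifest intact; the ledger file name, record fields, and function name are invented for this example.

```python
import hashlib
import json
import shutil
import tempfile
from datetime import datetime, timezone
from pathlib import Path
from typing import Dict


def archive_original(src: Path, archive_dir: Path, model: str) -> Dict[str, str]:
    """Copy the untouched original into the archive and log its SHA-256."""
    archive_dir.mkdir(parents=True, exist_ok=True)
    digest = hashlib.sha256(src.read_bytes()).hexdigest()
    dest = archive_dir / f"{digest[:16]}_{src.name}"
    shutil.copy2(src, dest)   # byte-for-byte copy, so embedded metadata is untouched
    record = {
        "file": dest.name,
        "sha256": digest,
        "model": model,
        "archived_at": datetime.now(timezone.utc).isoformat(),
    }
    # Append-only ledger: one JSON record per archived file.
    with (archive_dir / "ledger.jsonl").open("a", encoding="utf-8") as ledger:
        ledger.write(json.dumps(record) + "\n")
    return record


# Demo with a throwaway file standing in for a generated asset.
with tempfile.TemporaryDirectory() as tmp:
    source = Path(tmp) / "clip.mp4"
    source.write_bytes(b"stand-in bytes for a signed asset")
    record = archive_original(source, Path(tmp) / "archive", model="Veo 3.1")
    archived = Path(tmp) / "archive" / record["file"]
    copy_matches = archived.read_bytes() == source.read_bytes()
    ledger_lines = (
        (Path(tmp) / "archive" / "ledger.jsonl").read_text(encoding="utf-8").splitlines()
    )
print(copy_matches, len(ledger_lines))
```

Recording the hash at archive time gives you a way to show later that the file you hand over is the same one that left the model.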

Step 6: Document the workflow for legal review

Write a one-page SOP for your team that covers model choice, manifest verification, platform labeling, and archival. This is what a market surveillance authority will ask to see if they open a file.

Expert Perspectives

Andrew Jenks, Chair of the C2PA Steering Committee (Microsoft), has consistently described the standard as a transparency framework that empowers creators to show what they made and how, rather than a black-box authentication system.

Leonard Rosenthol, co-author of the C2PA specification and Chief Architect of Content Credentials at Adobe, has argued publicly that manifests are tamper-evident, not tamper-proof, and that soft bindings plus platform cooperation are essential to closing the loop.

Dr. Hany Farid, professor at UC Berkeley’s School of Information and a leading voice on digital forensics, has warned that metadata stripping at the platform layer is the single largest gap in the current disclosure regime and that watermarking will carry most of the verifiability load in practice.

FAQ

When does the EU AI Act require AI disclosure?

The EU AI Act Article 50 transparency obligations apply from August 2, 2026. Providers of generative AI systems must mark outputs in a machine-readable format, and deployers creating deepfakes must disclose that the content is artificially generated.

Does C2PA actually survive upload to TikTok, Instagram, or X?

It depends on the platform. TikTok, YouTube, LinkedIn, and Meta platforms preserve or read C2PA manifests and auto-apply AI labels. X does not currently read C2PA and aggressively strips metadata on upload. SynthID is the only signal that survives on platforms that strip JUMBF boxes.

Is Midjourney compliant with the EU AI Act?

As of April 2026, Midjourney does not ship C2PA manifests or SynthID-equivalent watermarks by default, making it the most exposed of the 15 True Models for EU AI Act Article 50 compliance. Creators using Midjourney for EU audiences must apply manual disclosure on every upload.

Does SynthID survive screenshots or re-encoding?

SynthID is designed to survive moderate compression, format changes, and re-encoding, which is its core advantage over C2PA hard bindings. It can degrade under extreme cropping, heavy filtering, or low-quality screen recordings, so it is not a perfect signal.

Do I need both C2PA and SynthID, or is one enough?

For professional work aimed at EU audiences, use both where available. C2PA provides detailed, human-readable provenance when the file format is preserved. SynthID recovers the disclosure signal after platforms strip metadata. Google’s Veo 3.1 and Nano Banana 2 ship both by default, which is why they are the safest default choice today.

Sources

Regulatory. European Commission, Regulation (EU) 2024/1689 (AI Act). White House, TAKE IT DOWN Act signing statement. California Legislative Information, SB 926. Tennessee ELVIS Act, Public Chapter 588.

Technical. [C2PA Specification v2.3](https://spec.