Pricing verified April 30, 2026.

If you are writing a check to an AI video API in 2026, three names belong on the short list: Seedance 2.0 from ByteDance, Kling 3.0 from Kuaishou, and Sora 2 from OpenAI. They are the three production-grade APIs that real agencies, brand teams, and studios are actually routing traffic to this quarter.

This is not a spec sheet. It is a working developer guide: what each model does differently at the architecture layer, what it costs per second of output, and which constraint should push you toward which API. If you are new to this space and want the friendlier version first, start with our Seedance 2.0 vs Kling 3.0 vs Veo 3.1 comparison and then come back here.

Pricing last verified April 30, 2026. API rates are sourced from fal.ai for Seedance and Kling; Sora 2 rates come from OpenAI’s official API documentation. Model aggregators (fal.ai, Replicate, OpenRouter) mark up some rates by 5 to 15 percent. Screenshots and UI references elsewhere in this article may reflect earlier versions. Prices change frequently, so verify with fal.ai or the vendor before making spending decisions.

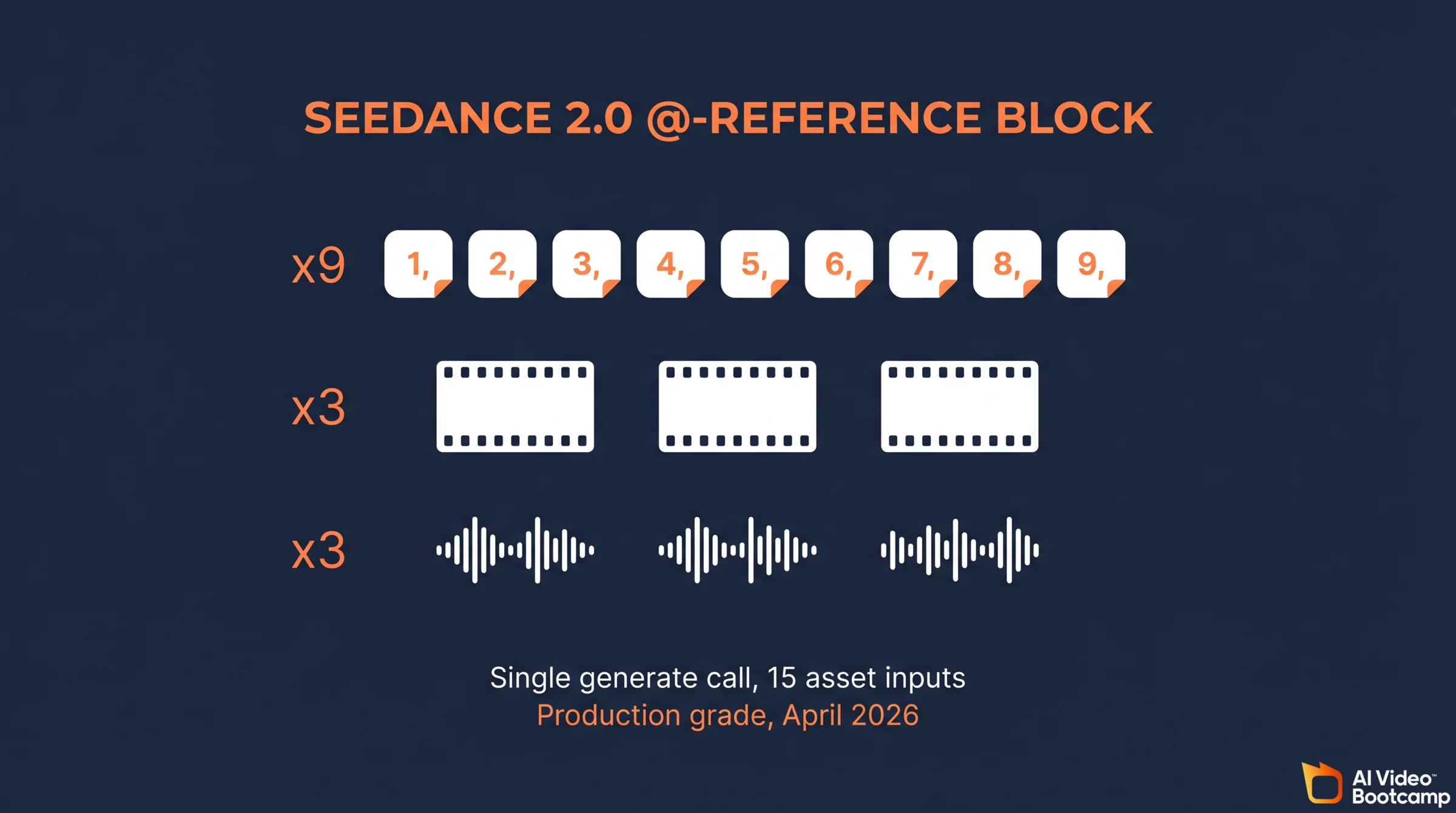

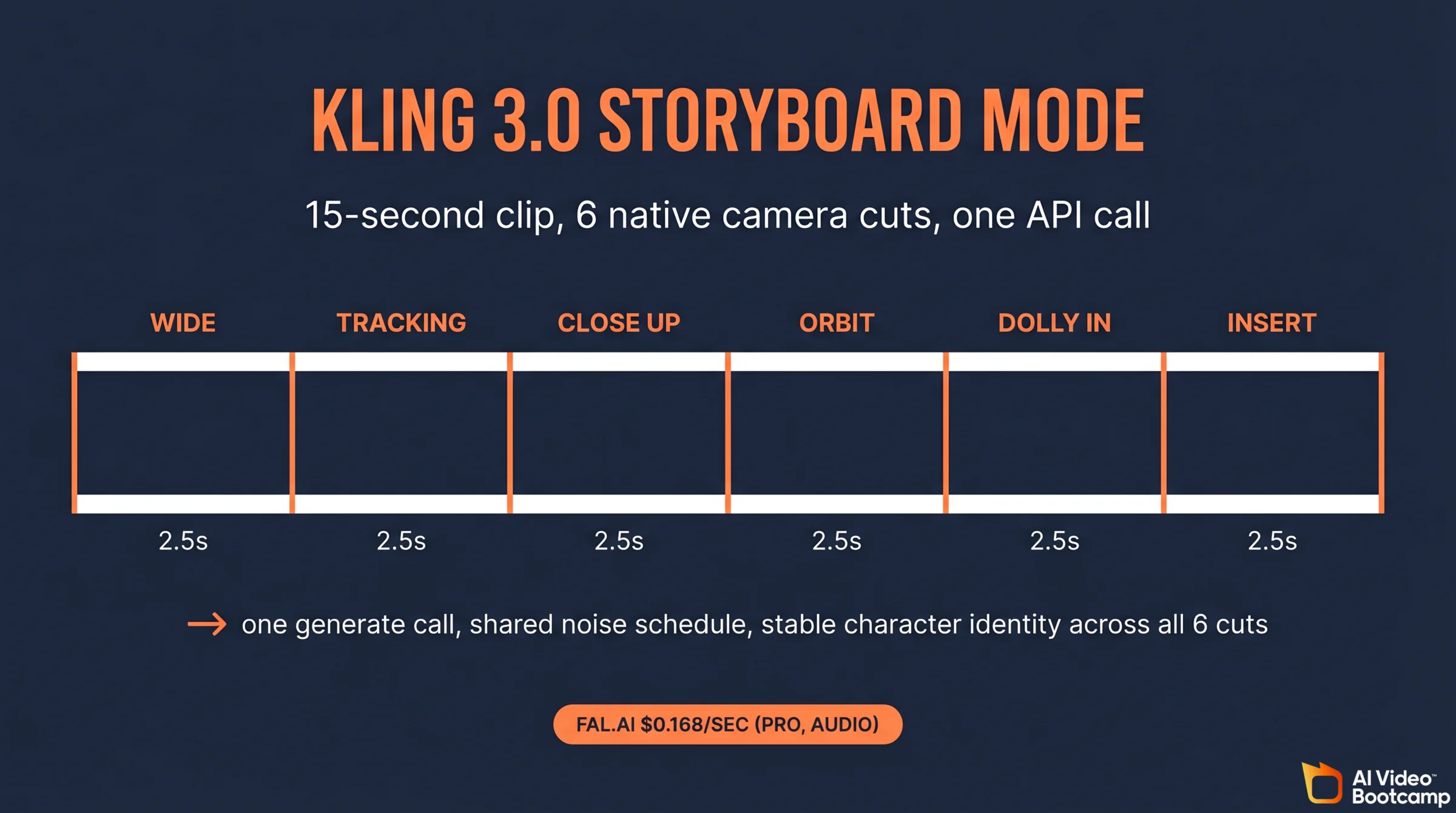

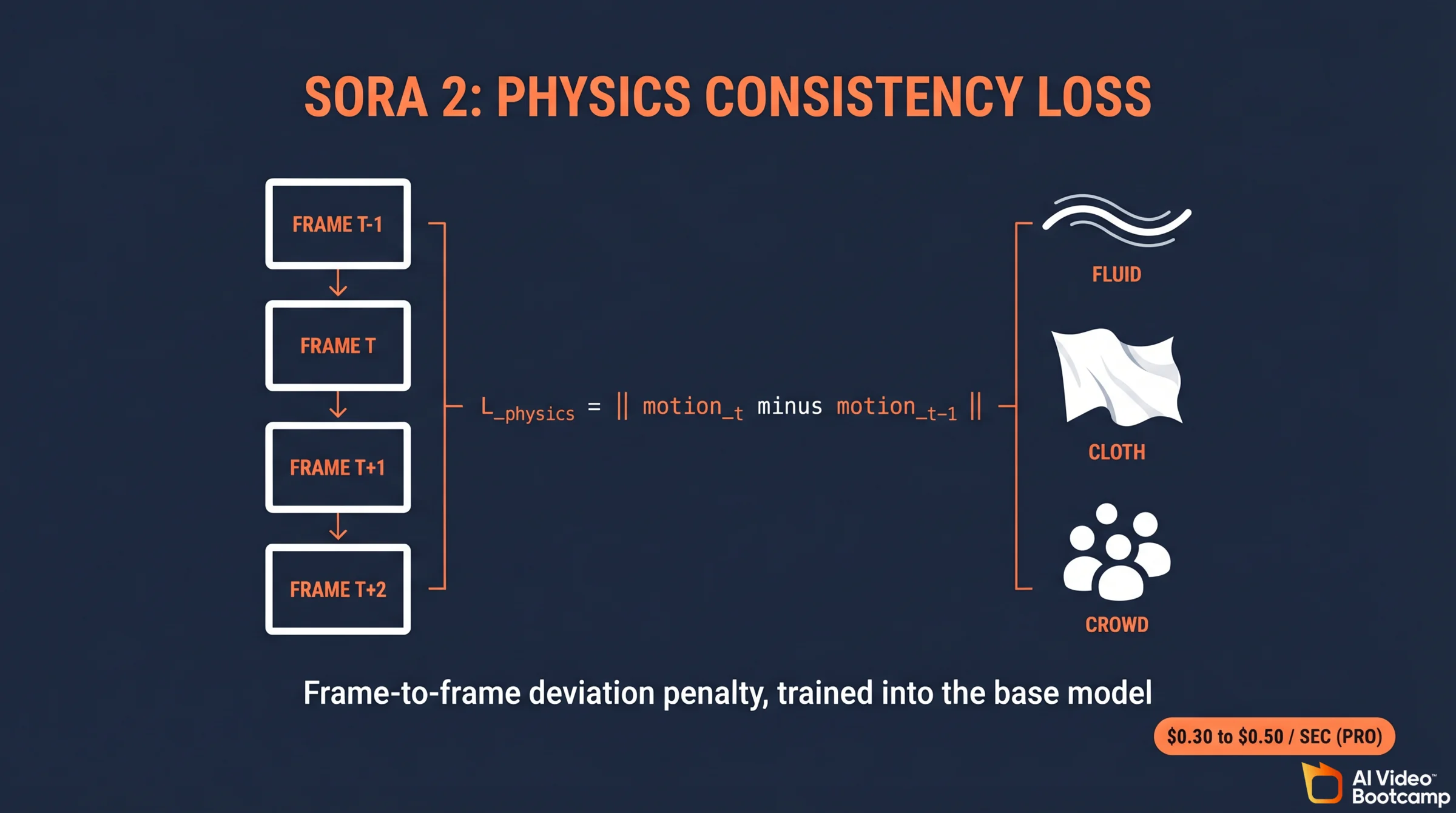

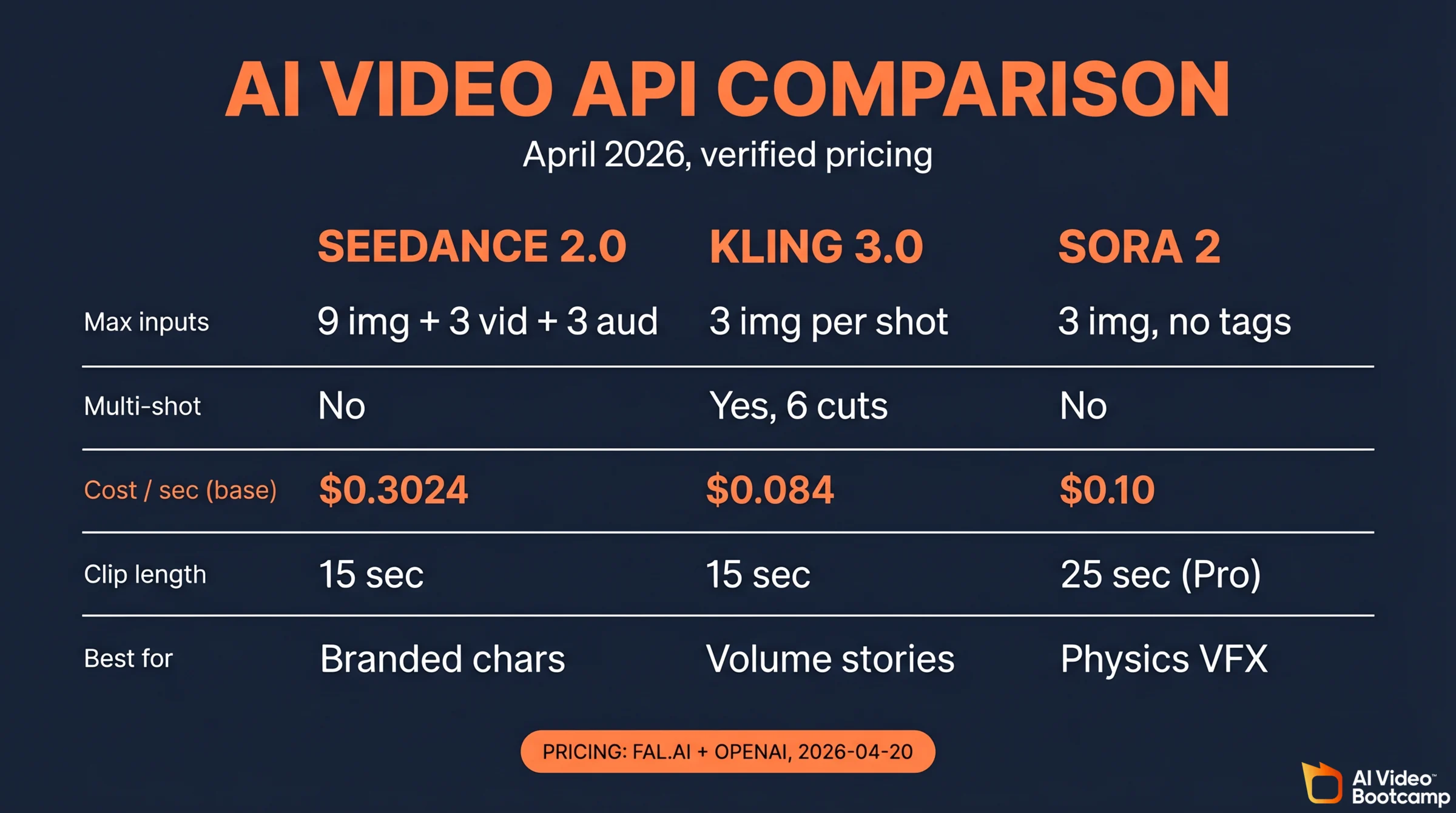

Seedance 2.0 leads on multimodal conditioning: its @-reference block accepts up to 9 images plus 3 video clips plus 3 audio tracks in a single generate call at roughly $0.30 per second for 720p via fal.ai. Kling 3.0 wins on structured narrative, generating 15 second clips with up to 6 native camera cuts at $0.084 to $0.196 per second depending on tier and audio. Sora 2 leads on physics fidelity and temporal consistency but runs $0.10 to $0.50 per second and trails Seedance on character identity retention. Most 2026 stacks use all three.

Five Facts to Know Up Front

- Seedance 2.0’s @-reference system accepts up to 9 images, 3 video clips, and 3 audio tracks per call, the widest multimodal fan-in of any production video API in April 2026.

- Kling 3.0’s storyboard mode generates up to 6 camera cuts natively inside a single 15 second clip, removing the post-stitch step from commercial workflows.

- Seedance 2.0 standard tier on fal.ai costs $0.3024 per second for 720p output, with a Fast 480p tier at $0.15 per second when a video reference is supplied (fal.ai Seedance pricing).

- Sora 2’s cost ceiling reaches $0.50 per generated second on OpenAI’s premium long-form tier, roughly 1.7x Seedance 2.0 Standard ($0.3024) and 3x Kling 3.0 Pro with audio ($0.168).

- Kling 3.0 Pro with audio costs $0.168 per second on fal.ai, the lowest 1080p sticker price among the three for generated output that includes native dialogue and ambient sound.

Seedance 2.0: Multimodal @-Reference and Dual-Branch Architecture

What the @-reference system actually does

Seedance 2.0’s defining API feature is the @-reference block. In a single generate call, a developer can attach:

- Up to 9 images tagged as @character, @product, @style, or @scene. Each tag maps to a role-specific adapter inside the model, not a generic bag-of-images embedding. Order matters: community testing on r/aivideo shows that the same three reference images passed in different sequence produce measurably different outputs. The reliable pattern is character references first, product or style references second, motion references last.

- Up to 3 video clips as motion or camera references. The model extracts per-frame optical flow and camera trajectories rather than pixel content, so you borrow motion without leaking the source footage.

- Up to 3 audio tracks for lip-sync targets, ambient sound beds, or voiceover pacing. Phoneme-to-mouth alignment happens inside the denoising schedule, not as a post-process, which is why Seedance’s lip sync holds up on dialogue-heavy clips.

That composition surface is the reason ad agency workflows skew toward Seedance. A creative director can pass a talent lookbook, a reference commercial, and a locked voiceover into one call and get a production-ready clip back.
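The limits and the ordering pattern described above can be pinned in a small helper. This is a minimal sketch, not the Seedance or fal.ai request schema: the `ReferenceBundle` class, the dictionary keys, and the decision to rank `scene` alongside `style` are all assumptions made for illustration. Only the 9/3/3 limits, the four role tags, and the character-first ordering come from the text.

```python
from dataclasses import dataclass, field

# Limits quoted in the text: 9 images, 3 video clips, 3 audio tracks per call.
MAX_IMAGES, MAX_VIDEOS, MAX_AUDIO = 9, 3, 3

# Character references first, product or style second (community-reported
# pattern). Ranking "scene" with "style" is an assumption of this sketch.
ROLE_PRIORITY = {"character": 0, "product": 1, "style": 1, "scene": 1}


@dataclass
class ReferenceBundle:
    images: list[tuple[str, str]] = field(default_factory=list)  # (role, url)
    videos: list[str] = field(default_factory=list)              # motion refs
    audio: list[str] = field(default_factory=list)

    def validate(self) -> None:
        """Raise ValueError if a limit is exceeded or a role tag is unknown."""
        for name, items, limit in (
            ("images", self.images, MAX_IMAGES),
            ("videos", self.videos, MAX_VIDEOS),
            ("audio", self.audio, MAX_AUDIO),
        ):
            if len(items) > limit:
                raise ValueError(f"{name}: {len(items)} supplied, limit is {limit}")
        for role, _url in self.images:
            if role not in ROLE_PRIORITY:
                raise ValueError(f"unknown image role: {role!r}")

    def ordered(self) -> list[dict]:
        """Return references in a fixed order: images by role, then motion, then audio."""
        self.validate()
        # sorted() is stable, so images with the same priority keep caller order.
        images = sorted(self.images, key=lambda pair: ROLE_PRIORITY[pair[0]])
        refs = [{"kind": "image", "role": role, "url": url} for role, url in images]
        refs += [{"kind": "video", "role": "motion", "url": url} for url in self.videos]
        refs += [{"kind": "audio", "url": url} for url in self.audio]
        return refs
```

Routing every call through one function like `ordered()` means two people submitting the same assets get the same sequence, which matters if ordering really does change the output.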

Under the hood, ByteDance’s technical report describes Seedance 2.0 as a Dual-Branch Diffusion Transformer that processes spatiotemporal tokens in one branch and audio waveform tokens in a parallel branch, joining them with cross-attention at every transformer block. That parallel design is why adding audio references does not cost you meaningful quality on the visual branch, a trade-off that shows up clearly in Kling’s pre-3.0 releases.

Pricing on fal.ai

| Seedance 2.0 tier | Resolution | Cost per second (fal.ai) | Notes |
|---|---|---|---|
| Standard | 720p | $0.3024 | Token-based under the hood: $0.014 per 1000 tokens |
| Fast | 480p | $0.15 | Multimodal with video input reduces cost |
| Seedance 1.0 Lite | 480p | $0.06 | Deprecated, Seedance 2.0 recommended |

A 1 minute 720p clip on Standard runs about $18.14 in API cost alone, before you factor audio, retries, and failed generations. A 15 second Fast clip with a video reference comes in around $2.25, which is the sweet spot for high-volume social content.
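The arithmetic behind those figures is easy to reproduce. The sketch below uses only the rates quoted above. The tokens-per-second figure is derived by dividing the two published numbers and is not something fal.ai states directly, and the `attempts` parameter is a hypothetical knob for budgeting retries.

```python
PRICE_PER_1K_TOKENS = 0.014      # USD, Standard tier note in the pricing table
PRICE_PER_SECOND_720P = 0.3024   # USD, Standard 720p
PRICE_PER_SECOND_FAST = 0.15     # USD, Fast 480p with a video reference

# Implied token volume per second of 720p output (derived, not published).
TOKENS_PER_SECOND = PRICE_PER_SECOND_720P / PRICE_PER_1K_TOKENS * 1000


def clip_cost(seconds: float, price_per_second: float, attempts: int = 1) -> float:
    """API cost in USD for `attempts` generations of a clip of this length."""
    return round(seconds * price_per_second * attempts, 2)


print(round(TOKENS_PER_SECOND))                 # 21600
print(clip_cost(60, PRICE_PER_SECOND_720P))     # 18.14
print(clip_cost(15, PRICE_PER_SECOND_FAST))     # 2.25
```

Setting `attempts` to the number of generations you typically burn per approved clip gives a more honest budget line than the single-pass price.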

Community pulse

On r/aivideo, Seedance 2.0 is praised for physics chaos (dense crowds, rapid limb interaction, unstable object stacks) and criticized for two things: the credit-to-API pricing gap between ByteDance’s consumer Dreamina tier and the enterprise API creates sticker shock when teams migrate, and the @-reference system has undocumented ordering effects. Most production teams now pin a prompt template that fixes reference order.

Kling 3.0: Multi-Shot Storyboarding and 4K Pricing

Storyboard mode, technically

Kling 3.0 Pro’s storyboard endpoint is not a post-hoc stitcher. It treats a 15 second clip as a single generation with up to 6 internal cut boundaries, each carrying its own camera prompt and shot type. At inference time, the model reuses the shared noise schedule across all shots, which keeps character identity stable across cuts better than a naive concatenate-and-generate approach.

Supported shot types inside one storyboard call include:

- wide, medium, close_up, extreme_close_up

- dolly_in, dolly_out, tracking, orbit, crane

- over_the_shoulder, pov, insert

Cut timing is specified as an ordered array of {shot_type, duration_seconds, prompt} objects. Durations must sum to the clip length (5, 10, or 15 seconds). The deeper dive on Kling specifically lives in our Kling AI complete guide, which includes the full prompt template we use internally.
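Because the durations have to add up exactly, it is worth checking a storyboard locally before spending on a call. The following is a minimal validation sketch under stated assumptions: the function name is ours, the object keys mirror the {shot_type, duration_seconds, prompt} shape described above, and "up to 6 cuts" is interpreted as at most 6 shot objects. It does not call any Kling or fal.ai endpoint.

```python
import math

ALLOWED_SHOT_TYPES = {
    "wide", "medium", "close_up", "extreme_close_up",
    "dolly_in", "dolly_out", "tracking", "orbit", "crane",
    "over_the_shoulder", "pov", "insert",
}
ALLOWED_CLIP_LENGTHS = (5, 10, 15)  # seconds
MAX_SHOTS = 6                       # assumption: "6 cuts" read as 6 shot objects


def validate_storyboard(shots: list[dict], clip_length: int) -> None:
    """Raise ValueError if the shot list breaks any rule described in the text."""
    if clip_length not in ALLOWED_CLIP_LENGTHS:
        raise ValueError(f"clip_length must be one of {ALLOWED_CLIP_LENGTHS}")
    if not 1 <= len(shots) <= MAX_SHOTS:
        raise ValueError(f"expected 1 to {MAX_SHOTS} shots, got {len(shots)}")
    for index, shot in enumerate(shots):
        if shot["shot_type"] not in ALLOWED_SHOT_TYPES:
            raise ValueError(f"shot {index}: unknown shot_type {shot['shot_type']!r}")
        if shot["duration_seconds"] <= 0:
            raise ValueError(f"shot {index}: duration must be positive")
        if not shot["prompt"].strip():
            raise ValueError(f"shot {index}: prompt is empty")
    total = sum(shot["duration_seconds"] for shot in shots)
    if not math.isclose(total, clip_length, abs_tol=1e-6):
        raise ValueError(f"durations sum to {total}, expected {clip_length}")


storyboard = [
    {"shot_type": "wide", "duration_seconds": 4, "prompt": "Storefront at dawn"},
    {"shot_type": "dolly_in", "duration_seconds": 6, "prompt": "Push toward the counter"},
    {"shot_type": "close_up", "duration_seconds": 5, "prompt": "Hands opening the box"},
]
validate_storyboard(storyboard, clip_length=15)  # passes silently
```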

Kuaishou’s research team describes the backbone as a Multi-Modal Visual Language (MVL) framework paired with what they call a Visual Drift Killer, a training-time consistency loss that penalizes identity drift across shot boundaries. The Visual Drift Killer is the technical reason storyboard mode works as a single generation instead of six stitched clips.

Pricing on fal.ai

| Kling 3.0 tier | Audio | Cost per second (fal.ai) | Notes |
|---|---|---|---|
| Pro 1080p | No audio | $0.112 | Image-to-video endpoint |
| Pro 1080p | Audio | $0.168 | Native audio generation |
| Pro 1080p | Audio + voice control | $0.196 | Premium control surface |
| Standard 720p | No audio | $0.084 | Value tier |
| Standard 720p | Audio | $0.126 | Audio add-on |
| Standard 720p | Audio + voice control | $0.154 | Mid tier |

A 15 second clip on Pro with audio runs $2.52. A 15 second clip on Standard without audio runs $1.26. At the agency volume tier (thousands of clips per month), Standard without audio is the default and audio is layered in via ElevenLabs or the native Kling audio pass only on hero shots.

Consumer plans vs API

If you are prototyping and not yet ready to commit to per-second API economics, Kling’s consumer plans on kling.ai are worth a look: Standard at $6.99 per month, Pro at $25.99 per month, and Premier at $64.99 per month. These plans bundle a credit pool and lower-priority queues rather than metered API access, which is fine for experimentation and wrong for production.

Community pulse

r/aivideo threads consistently note three Kling 3.0 strengths: storyboard mode (especially for product demo and explainer ads), camera move adherence (orbit and crane shots render more reliably than on Seedance), and the price point for agencies burning thousands of clips per month. Common complaints: lip sync degrades on non-English speech, and the 4K mode adds 20 to 30 percent latency versus 1080p.

Sora 2: Physics, Temporal Consistency, and the Cost Ceiling

Where Sora 2 wins

Sora 2 remains the benchmark for physics simulation and temporal consistency on long takes. On tasks involving fluid dynamics, rigid-body collisions, cloth simulation, and articulated multi-limb motion, Sora 2 produces fewer warping artifacts than Seedance 2.0 over clips longer than 5 seconds. The underlying reason, per OpenAI’s Sora 2 system card, is a dedicated physics consistency loss applied during training, which penalizes frame-to-frame deviations from learned motion manifolds.

Temporal consistency on subjects (same character across a 12 second clip, same lighting across a camera move) is also where Sora 2 edges its peers. Developers building narrative content report that Sora 2 needs fewer regenerations per approved clip, which partly offsets the higher unit cost.
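One way to reason about that offset is cost per approved clip rather than cost per generated second. The sketch below is illustrative only: it assumes each attempt is approved independently with a fixed probability, and the approval rates in the example are made-up placeholders, not measured figures for either model.

```python
def cost_per_approved_clip(
    price_per_second: float, seconds: float, approval_rate: float
) -> float:
    """Expected spend in USD to obtain one approved clip.

    Assumes independent attempts with a constant approval probability, so the
    expected number of attempts is 1 / approval_rate.
    """
    if not 0 < approval_rate <= 1:
        raise ValueError("approval_rate must be in (0, 1]")
    expected_attempts = 1 / approval_rate
    return price_per_second * seconds * expected_attempts


# Hypothetical approval rates, chosen only to show the arithmetic.
sora_pro = cost_per_approved_clip(0.30, 10, approval_rate=0.50)
kling_pro_audio = cost_per_approved_clip(0.168, 10, approval_rate=0.25)
print(round(sora_pro, 2), round(kling_pro_audio, 2))  # 6.0 6.72
```

With those placeholder rates the more expensive model ends up cheaper per approved clip. Whether that holds for your briefs depends entirely on approval rates you measure yourself.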

Where Sora 2 loses

Two trade-offs show up repeatedly in developer feedback:

- Character fidelity. Seedance 2.0’s @-reference system beats Sora 2 when the brief is “make this exact character, in this exact outfit, do this action.” Sora 2’s reference upload supports fewer images per request and does not expose role tagging, so identity drift is more common on complex character briefs. Internal benchmarks from production teams show Sora 2 Storyboard mode hitting around 72 percent character similarity versus Seedance’s 91 percent on the same multi-shot brief.

- Cost ceiling. Sora 2’s Pro long-form tier runs up to $0.50 per generated second. That is roughly 1.7x Seedance 2.0 Standard and 3x Kling 3.0 Pro with audio. High-volume workloads (ads, social, product demos) do not pick Sora 2 as the default on unit economics alone.

Pricing (OpenAI direct)

Sora 2 is not currently carried on fal.ai. The rates below come from OpenAI’s developer documentation for the Sora API.

| Sora 2 tier | Resolution | Cost per second | Notes |
|---|---|---|---|
| sora-2 Standard | 720p | $0.10 | Base tier |
| sora-2-pro | 1080p | $0.30 | Pro tier |
| sora-2-pro Long-Form | 1080p, up to 25s | $0.50 | Premium cinematic tier |

A 25 second premium Sora clip runs $12.50, roughly the cost of 41 seconds of Seedance Standard output ($12.50 / $0.3024) or 149 seconds of Kling Standard output without audio ($12.50 / $0.084).

Workarounds that help

Two patterns consistently improve Sora 2 output in production:

- JSON-structured prompts. Instead of a paragraph, pass Sora an object with subject, action, camera, lighting, duration, style, and negative fields. The model parses structured prompts more reliably than narrative prose, which reduces the iteration loop. Our AI video prompting guide covers the full template.

- Negative parameters. Sora 2 accepts a negative_prompt field that materially reduces common artifacts (floating limbs, duplicated fingers, text hallucinations on signage). Agencies standardize a shared negative prompt library across projects.
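A structured prompt of that shape can be assembled with a small builder so every brief carries the same fields in the same order. This is a sketch under assumptions: the seven field names come from the list above, but the function name, the choice to serialize to a JSON string, and the sample values are ours. How the resulting string is passed to the Sora API is deliberately left out.

```python
import json

# Field names as listed in the text; the fixed ordering is our convention.
PROMPT_FIELDS = ("subject", "action", "camera", "lighting", "duration", "style", "negative")


def build_structured_prompt(**fields: object) -> str:
    """Serialize a prompt object with exactly the expected fields, in a fixed order."""
    missing = [name for name in PROMPT_FIELDS if name not in fields]
    unknown = [name for name in fields if name not in PROMPT_FIELDS]
    if missing or unknown:
        raise ValueError(f"missing fields: {missing}, unknown fields: {unknown}")
    ordered = {name: fields[name] for name in PROMPT_FIELDS}
    return json.dumps(ordered, indent=2)


prompt_text = build_structured_prompt(
    subject="ceramic mug on a wooden table",
    action="steam rises as coffee is poured",
    camera="slow dolly in, eye level",
    lighting="soft morning window light",
    duration="8 seconds",
    style="photoreal product commercial",
    negative="floating limbs, duplicated fingers, garbled signage text",
)
print(prompt_text)
```

Keeping the negative terms in a shared constant and passing it into `negative` is one simple way to implement the shared library mentioned above.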

Head-to-Head Technical Comparison

This is the table we keep pinned internally when routing jobs across the three APIs.

| Feature | Seedance 2.0 | Kling 3.0 Pro | Sora 2 |
|---|---|---|---|
| Developer | ByteDance | Kuaishou | OpenAI |
| Max native resolution | 720p (standard) | 1080p, upscaled 4K | 1080p |
| Max frame rate | 30 fps | 60 fps (4K tier) | 30 fps |
| Max clip duration | 15 seconds | 15 seconds | 25 seconds (Pro Long-Form) |
| Multimodal reference inputs | 9 images + 3 videos + 3 audio | Up to 3 images per shot, storyboard-scoped | Up to 3 images, no role tags |
| Multi-shot in one call | No, single shot | Yes, up to 6 cuts | No, single shot |
| Native audio generation | Yes | Yes (English strongest) | Yes |
| Physics fidelity | High on chaos scenes | Moderate | Best in class |
| Temporal consistency | Very good, drifts past 10s | Very good across cuts | Best in class |
| Character identity (complex briefs) | Best (role-tagged refs) | Good | Moderate |
| Cost per second (base, fal.ai or OpenAI) | $0.3024 | $0.084 (no audio, Standard) | $0.10 |
| Cost per second (cheapest production tier) | $0.15 (Fast 480p + video ref) | $0.084 (Standard no audio) | $0.10 |
| Cost per second (top tier) | $0.3024 | $0.196 (Pro audio + voice) | $0.50 (Pro Long-Form) |
| API availability | Live (fal.ai) | Live (fal.ai) | Live (OpenAI direct) |
| Best-fit workflow | Branded character ads | E-commerce, explainers, multi-cut | Cinematic narrative, physics-heavy VFX |

Decision Matrix for Developers

Pick the model that matches your primary constraint, not the average case.

| If your top constraint is… | Pick | Why |
|---|---|---|
| Volume at low cost per second | Kling 3.0 Standard | $0.084 per second without audio is the lowest production rate among the three |
| Exact character identity across scenes | Seedance 2.0 | @-reference role tags (@character, @product) beat every other model on identity lock |
| Physics realism (water, cloth, crowd) | Sora 2 Pro | Dedicated physics consistency loss, fewer regenerations per approved clip |
| Multi-shot narrative in one call | Kling 3.0 Pro | Native storyboard mode with up to 6 cuts inside a single 15 second clip |
| Multi-input prompts (images + footage + audio) | Seedance 2.0 | 9 images, 3 clips, 3 audio tracks per call, no other model matches |
| Clips longer than 15 seconds | Sora 2 | Pro Long-Form supports up to 25 seconds |
| Premium cinematic look, budget not a constraint | Sora 2 Pro | Best temporal consistency and physics, accepts the $0.30 to $0.50 per second cost |
| Native audio at 1080p at the lowest cost | Kling 3.0 Pro | $0.168 per second with audio, well under sora-2-pro at $0.30 |

Quick recipe if you are forced to pick only one

- Agency shipping 1,000+ clips per month: Kling 3.0 Standard without audio, layered audio on approved shots. Cost discipline compounds fast.

- Brand team with a locked character cast: Seedance 2.0. Identity retention is the bottleneck, and the @-reference system is built for exactly this problem.

- Film or VFX studio on a single prestige project: Sora 2 Pro Long-Form. The physics gap is real and the per-second cost washes out on a one-off.

The 2026 Three-Model Production Stack

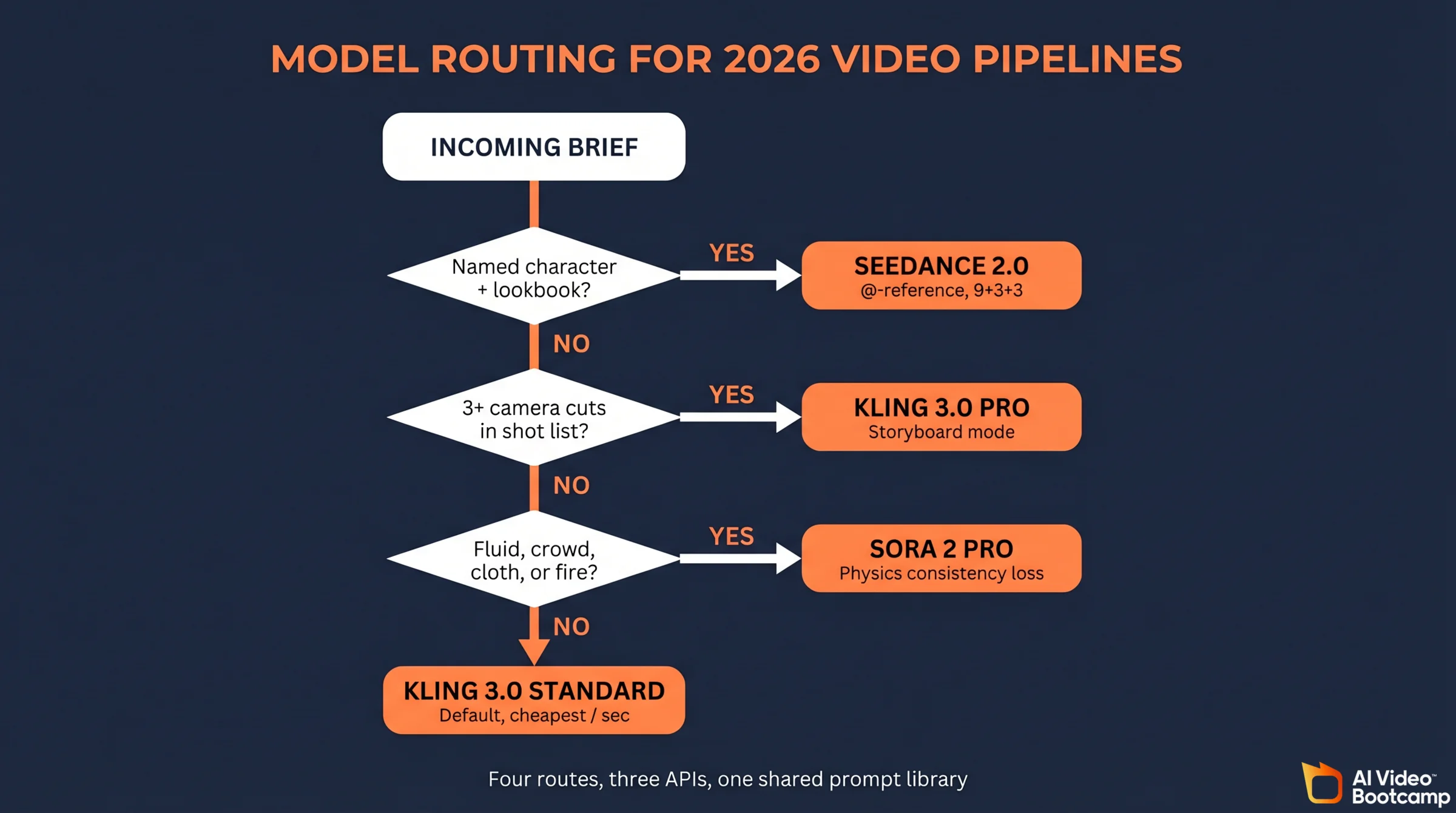

For members building real pipelines, the practical stack is not one model but three, with clear routing rules.

- Seedance 2.0 as the default for branded character work (ads, UGC-style content, product demos with repeat cast). The Seedance 2.0 deep dive covers the recent legal heat around the model, which is worth reading before you commit budget.

- Kling 3.0 as the scale layer for high-volume social content and multi-cut explainers where cost per second matters more than identity lock.

- Sora 2 as the premium finisher reserved for hero shots, physics-heavy sequences, or narrative clips longer than 15 seconds.

That three-model stack sits alongside the still image layer (Midjourney v7 for mood, Nano Banana Pro for character boards, Flux.2 Pro for photoreal product shots) and the audio layer (ElevenLabs for voice, native model audio for ambient beds). None of the three video models replaces the image layer. For broader ranking context across the full market, see AI video generators ranked for 2026.

One routing factor that becomes a hard requirement from August 2, 2026 onward: provenance signals. Among these three models, Sora 2 ships C2PA Content Credentials by default, while Seedance 2.0 and Kling 3.0 do not, so teams targeting EU audiences need to add a separate manifest layer or platform label when routing through Seedance or Kling. The full breakdown is in our AI disclosure compliance guide for 2026.

What This Means for Your Production Workflow

The practitioner takeaway: model routing is a first-class concern in 2026. Teams running a single model through a single endpoint are leaving 40 to 60 percent of their quality-per-dollar on the table compared to teams routing by brief type.

A minimal router looks like this:

- If the brief has a named character and a lookbook, route to Seedance 2.0.

- If the brief has a shot list with 3 or more cuts, route to Kling 3.0 Pro storyboard.

- If the brief mentions fluid, crowd, cloth, or fire, route to Sora 2 Pro.

- Otherwise, default to Kling 3.0 Standard on cost.

Build that as a small decision tree in your prompt-ops layer, keep the three API keys rotating, and budget monthly on a per-model basis. That is what separates the teams shipping 10,000 clips per month from the ones still burning on a single provider.
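The four rules translate almost line for line into code. The sketch below is one possible shape for that decision tree, not a prescribed implementation: the `Brief` fields, the route labels, and the first-match-wins rule order are assumptions, and the keyword check is a naive substring match that a real prompt-ops layer would want to tighten.

```python
from dataclasses import dataclass

# Keywords taken from the physics rule above; matching is a plain substring test.
PHYSICS_KEYWORDS = ("fluid", "crowd", "cloth", "fire")


@dataclass
class Brief:
    text: str
    named_character: bool = False
    has_lookbook: bool = False
    cut_count: int = 1


def route(brief: Brief) -> str:
    """Return a route label; rules are checked in the order listed, first match wins."""
    if brief.named_character and brief.has_lookbook:
        return "seedance-2.0"
    if brief.cut_count >= 3:
        return "kling-3.0-pro-storyboard"
    lowered = brief.text.lower()
    if any(keyword in lowered for keyword in PHYSICS_KEYWORDS):
        return "sora-2-pro"
    return "kling-3.0-standard"


print(route(Brief("Mascot unboxing the new can", named_character=True, has_lookbook=True)))
print(route(Brief("Four-shot explainer for the app", cut_count=4)))
print(route(Brief("Silk cloth falling over a car")))
print(route(Brief("Looping background for a landing page")))
```

The route labels are placeholders for whatever endpoint identifiers your own client code uses. Keeping the rules in one pure function makes them easy to unit test and to log alongside per-model spend.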

Frequently Asked Questions

Is Sora 2 available on fal.ai?

No. Sora 2 is currently only available through OpenAI’s direct API. Seedance 2.0 and Kling 3.0 are both available on fal.ai, which is why those two rates in this article come from fal.ai and Sora rates come directly from OpenAI’s developer documentation.

Which API is cheapest per second in April 2026?

Kling 3.0 Standard without audio at $0.084 per second on fal.ai is the cheapest production-grade rate among the three. Sora 2 Standard at $0.10 per second is second. Seedance 2.0 Fast at $0.15 per second (480p with video reference) is third. Seedance 2.0 Standard at $0.3024 per second is the most expensive base tier, but it is also the only one with the full 9 + 3 + 3 multimodal reference system.
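That ranking can be regenerated whenever prices move. The sketch below hard-codes the per-second rates from the pricing tables in this article as of the verification date. The dictionary layout and function name are ours, and the numbers should be refreshed from fal.ai and OpenAI before being relied on.

```python
# USD per generated second, copied from the pricing tables in this article.
RATES = {
    "Seedance 2.0": {"Standard 720p": 0.3024, "Fast 480p (video ref)": 0.15},
    "Kling 3.0": {
        "Standard, no audio": 0.084,
        "Standard, audio": 0.126,
        "Pro, no audio": 0.112,
        "Pro, audio": 0.168,
    },
    "Sora 2": {"sora-2 Standard": 0.10, "sora-2-pro": 0.30, "sora-2-pro Long-Form": 0.50},
}


def cheapest_per_model(rates: dict) -> list[tuple[str, str, float]]:
    """Return (model, tier, rate) for each model's cheapest tier, lowest rate first."""
    rows = []
    for model, tiers in rates.items():
        tier, rate = min(tiers.items(), key=lambda item: item[1])
        rows.append((model, tier, rate))
    return sorted(rows, key=lambda row: row[2])


for model, tier, rate in cheapest_per_model(RATES):
    print(f"{model}: {tier} at ${rate:.3f} per second")
```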

Does Kling 3.0 actually generate native 4K?

Kling 3.0’s 4K 60 fps tier is partly a perceptual upscale on top of a roughly 1440p native denoising loop, based on community reports and latency measurements. The output hits a true 4K pixel dimension, but the detail density sits closer to native 1440p than native 4K. Treat the 4K label as marketing-native rather than mathematically native until Kuaishou publishes a technical paper on the upscale pipeline.

Can I pass the same reference images to all three APIs?

Yes, but with different limits and effects. Seedance 2.0 accepts up to 9 images with role tags like @character and @product, and ordering matters. Kling 3.0 accepts up to 3 reference images per shot through its Elements feature, scoped to the storyboard structure. Sora 2 accepts a small number of reference images (typically up to 3) without role tags, so identity drift is more common. For brand-character work with a locked look, Seedance is the only one designed for that workflow.

Why does Sora 2 cost so much more than Seedance or Kling?

Two reasons. First, Sora 2’s physics consistency loss and longer maximum clip duration (up to 25 seconds on Pro Long-Form) require materially more compute per generation. Second, OpenAI prices on a positioning basis for premium cinematic use, not on the high-volume social use case that Kling and Seedance optimize for. For agency workloads burning thousands of clips per month, Sora 2 is rarely the default.

Caveats and What Is Not Yet Verified

- The Kling 3.0 4K 60 fps tier is partly a perceptual upscale on top of a roughly 1440p native denoising loop, based on community reports. Treat the 4K label as marketing-native until Kuaishou publishes a technical blog or arXiv preprint.

- The Sora 2 physics consistency loss is summarized from OpenAI’s system card, not a peer-reviewed paper. The magnitude of its contribution to the observed quality gap is reported, not independently measured.

- The Seedance 2.0 @-reference ordering effect (same images, different order, different output) is a reproducible community observation, not an officially acknowledged behavior.

- Pricing for all three models should be re-checked monthly. Unit economics in this market have shifted materially every quarter through 2026.

Sources

- fal.ai model catalogue and pricing (Seedance 2.0 and Kling 3.0 rates, verified 2026-04-20)

- Kling AI official consumer pricing (verified 2026-04-20)

- OpenAI Sora API documentation

- ByteDance Seedance 2.0 pricing coverage, TechNode, March 2026

- Artificial Analysis Video Arena, April 2026 snapshot

- Seedance 2.0 deep dive on AI Video Bootcamp

- Kling AI complete guide on AI Video Bootcamp

- Seedance vs Kling vs Veo 2026 on AI Video Bootcamp
