Pricing verified April 30, 2026.

Suno AI is the most widely used AI music generator in 2026, with 2 million paid subscribers generating 7 million tracks per day across every genre imaginable. For AI video creators, it solves the last major production bottleneck: original, royalty-free music that matches your content perfectly. Whether you need a 30-second background track for a product ad, a full cinematic score for an AI short film, or a complete song with vocals for a music video, Suno generates commercially licensed audio from a text prompt in under 30 seconds. Combined with AI video tools like Kling, Runway, Veo, and Seedance, Suno completes the one-person studio stack that is reshaping content creation in 2026.

What Is Suno AI and Why Should Video Creators Care?

Suno is a browser-based AI music platform that generates full songs - vocals, instruments, mixing, and mastering included - from text descriptions. It is the audio equivalent of what Midjourney did for images and what Runway did for video: it removed the technical barrier entirely.

For AI video creators specifically, Suno matters because sound is what separates a tech demo from watchable content. As we covered in our guide to adding voice and sound to AI videos, silent AI videos get crushed by platform algorithms. Suno gives every creator access to unlimited, custom-tailored music without licensing fees, royalty negotiations, or musical training.

The numbers tell the story. According to TechCrunch, Suno reached $300 million in annual recurring revenue by February 2026, up 404% year-over-year. Nearly 100 million users have signed up for the platform to date, with 10 million actively using it. In November 2025, as TechCrunch reported, the company raised $250 million in Series C funding at a $2.45 billion valuation, led by Menlo Ventures with participation from Nvidia’s NVentures, Lightspeed Venture Partners, and Matrix Partners. That valuation jumped from $500 million to $2.45 billion in just six months.

As 50 Cent told Complex when asked about AI soul renditions of his classic tracks that went viral online: “I really like those songs. The ones that I’ve posted, I really like them. It will reach someone that I missed. Someone who couldn’t hear what I was trying to say to them, in the writing, can hear it now that it’s in that format.” When the journalist pressed whether AI competition is concerning since “they’re machines,” 50 Cent responded: “I don’t think you can beat AI, I think you need to go with it.”

You can watch 50 Cent sharing the AI soul rendition on his Instagram.

That mindset - working with AI rather than against it - is exactly what the most successful creators in this space have adopted.

Suno AI Pricing and Plans: Which Tier Do Video Creators Need?

Pricing last verified April 30, 2026. Consumer subscription plans are from Suno’s official site. Prices change frequently - double-check with Suno before making spending decisions.

Every AI video creator considering Suno needs to understand one critical distinction: free tier users get zero commercial rights. If you plan to monetize any content that uses Suno-generated music, you need a paid plan.

| Plan | Price | Credits | Approx. Songs | Commercial Rights | Best For |
|---|---|---|---|---|---|
| Free | $0 | 50 / day | ~10 / day | No | Testing and experimentation only |
| Pro | $10 / mo | 2,500 / mo | ~500 / mo | Yes - Full | Solo creators, YouTubers, podcasters |
| Premier | $30 / mo | 10,000 / mo | ~2,000 / mo | Yes - Full | Agencies, high-volume producers, B2B licensing |

At $10/month for ~500 commercially licensed songs, the Pro plan costs roughly $0.02 per track. Compare that to licensing a single stock music track from a traditional library, which typically runs $15-$50 per song. For AI video creators producing content regularly, this is not just cheaper - it is a fundamentally different economic model.
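The per-track figure follows directly from the plan table. Here is a minimal sketch of that arithmetic, assuming the prices and approximate song counts listed above. The `PLANS` dictionary and `cost_per_song` function are illustrative names, not part of any Suno tooling.

```python
# Monthly price (USD) and approximate songs per month, copied from the plan table.
PLANS = {
    "Pro": {"price_usd": 10, "songs_per_month": 500},
    "Premier": {"price_usd": 30, "songs_per_month": 2000},
}


def cost_per_song(plan: str) -> float:
    """Return the approximate cost of one song on the given plan, in USD."""
    details = PLANS[plan]
    return details["price_usd"] / details["songs_per_month"]


for name in PLANS:
    print(f"{name}: ${cost_per_song(name):.3f} per song")
```

On these numbers, Pro comes to about two cents per song and Premier to about one and a half cents, assuming every monthly credit is actually used.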

As outlined in Suno’s Terms of Service, upon generation Suno contractually assigns its right, title, and interest in the output directly to the paying user. This legal assignment permits unrestricted distribution to Spotify, Apple Music, Amazon Music, YouTube, and any other platform.

AI Video Bootcamp recommendation: The Pro plan at $10/month is the sweet spot for most video creators. You get 500 songs per month with full commercial rights, which is more than enough for YouTube channels, client work, and social media content. Only upgrade to Premier if you are running a music-focused business or producing content at agency scale.

Suno’s Technical Evolution: From V2 to V5.5

Suno’s progress from 2023 to 2026 represents one of the fastest quality improvements in any generative AI category. Understanding the version history helps creators appreciate what is possible today and set realistic expectations.

| Version | Released | Max Length | Key Breakthroughs |
|---|---|---|---|
| V2 | Fall 2023 | 1 min 20 sec | Basic text-to-audio; heavy artifacts; limited coherence |
| V3 / V3.5 | Spring/Summer 2024 | 4 min (extendable by 2 min) | Improved song structure; maintained chorus melodies; suffered from "reverb fog" |
| V4 / V4.5 | Nov 2024 / May 2025 | 8 min | Drastically improved vocal clarity; Cover and Persona features; smarter style mashups |
| V5 | September 2025 | 8+ min | Studio-quality "dry" vocals; eliminated reverb fog; quantum leap in fidelity |
| V5.5 | March 25, 2026 | 8+ min | Custom voice cloning (Voices); personal model training; My Taste personalization |

The V3.5-to-V5 transition was the critical inflection point. V3.5 tracks suffered from what the community called “reverb fog” - a metallic, muddy acoustic smear that made everything sound like it was recorded at the end of a tunnel. As documented in this complete guide to Suno V5 and Studio, V4 and V5 solved this with deeper neural networks that render bone-dry, intimate transients, producing audio that stands up to critical listening on professional studio monitors. The Suno Studio v1.2 walkthrough on YouTube demonstrates these improvements with direct audio comparisons.

Suno V5.5: The Three Features That Matter (March 2026)

V5.5 launched on March 25, 2026 with three major additions that shift Suno from generic generation toward identity-driven music creation, as Music Business Worldwide reported:

Voices (Custom Voice Cloning): Users can now train the model on their own singing voice. Upload your vocals, complete a quick identity verification (match a spoken phrase to confirm ownership), and Suno builds a private voice profile only you can access. This is limited to Pro and Premier subscribers at 4 credits per generation during the beta. For AI video creators, this means you can create a consistent vocal identity across all your content.

Custom Models: Upload a minimum of six original tracks you own the rights to, and Suno fine-tunes V5.5 on your stylistic patterns. Pro and Premier users can create up to three custom models. This is powerful for creators who have developed a specific sonic brand and want AI to extend it rather than generate from scratch.

My Taste: A recommendation engine that learns from your interactions - tracking preferred genres, moods, and patterns over time. It feeds back into generation, so the more you use Suno, the better it understands your creative preferences. Available on all tiers including free.

Suno Studio: The Browser-Based DAW

The most transformative update for professional producers was the introduction of Suno Studio alongside the V5 architecture. Studio shifted the platform from passive generation to active, surgical manipulation.

Key Studio capabilities that matter for video creators:

Stem Separation (up to 12 pristine stems): Because Suno mathematically generated the track in the first place, its extraction process does not merely EQ the audio - it uses its own latent models to rebuild individual stems from scratch. This produces isolated vocals, drums, bass, guitars, and synths with zero acoustic bleed. For video creators, this means you can pull out just the instrumental, just the vocals, or just the drums to layer under narration or dialogue.

MIDI Extraction: Suno can transcribe every note, chord progression, and rhythmic velocity from a generated track and export it as standard MIDI data. Producers can feed this into hardware synthesizers or professional VST instruments, completely eliminating any remaining AI audio artifacts. This bridges the gap between AI generation and traditional sound design.

Warp Markers: Snap lazy or imprecise AI timing into a quantized professional grid, fixing the rhythmic drift that occasionally appeared in earlier models, as demonstrated in the Suno Studio v1.2 YouTube walkthrough.

Creative Sliders: The “Weirdness” slider (0-100%) gives direct mathematical control over creative risk. Low settings (35-45%) produce conventional, safe pop structures. High settings (70%+) push the model into experimental genre-blending territory.

How to Prompt Suno Like a Professional: The GMIV Framework

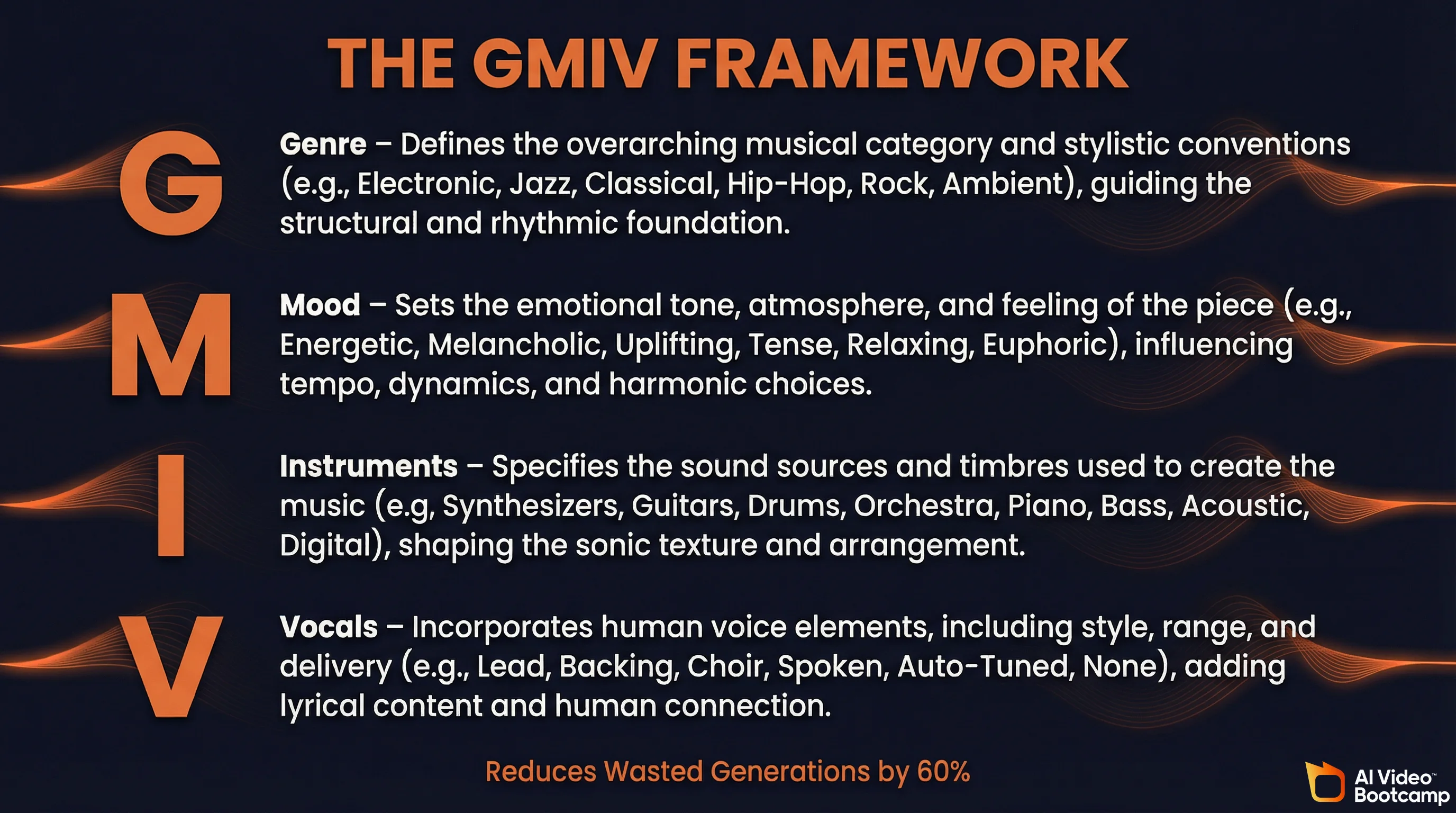

Generating commercially viable music requires understanding how Suno maps text to sound. Random, casual prompts produce random, casual results. Professional AI music producers use the GMIV Framework, a structured prompting methodology that reduces wasted generations by up to 60%, as detailed in this Suno AI prompting masterclass.

GMIV stands for:

G - Genre: Define 1-2 primary genres and sub-genres to anchor the model. Example: “Mainstream Pop, Contemporary Synthpop.” This genre prompting tutorial on YouTube walks through how to dial in nearly any genre.

M - Mood: Establish a single emotional anchor. Multiple or conflicting moods (“happy but melancholic”) dilute the output and cause structural confusion. Pick one: “Euphoric,” “Tense,” “Melancholic,” “Triumphant.”

I - Instruments: Constrain the sonic palette to 2-4 specific instruments. Example: “Analog Moog bass, 808 drums, fingerstyle acoustic guitar.” This prevents the AI from generating a wall of muddy, undifferentiated sound.

V - Vocals: Define the human anchor including gender, register, and delivery style. Example: “Female Soprano, breathy delivery, raspy lead.”
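The four GMIV parts can be combined into a single style prompt with a small helper. This is a sketch only: the comma-separated ordering and the `build_gmiv_prompt` function are assumptions for illustration, since the framework describes what to include rather than an exact string format.

```python
def build_gmiv_prompt(genres, mood, instruments, vocals):
    """Join the four GMIV parts into one comma-separated style prompt.

    Raises ValueError when a part falls outside the ranges the framework suggests.
    """
    if not 1 <= len(genres) <= 2:
        raise ValueError("GMIV suggests 1-2 primary genres")
    if not mood or "," in mood:
        raise ValueError("GMIV suggests a single mood")
    if not 2 <= len(instruments) <= 4:
        raise ValueError("GMIV suggests 2-4 instruments")
    if not vocals:
        raise ValueError("describe the vocals, or pass 'no vocals'")
    return ", ".join([*genres, mood, *instruments, vocals])


prompt = build_gmiv_prompt(
    genres=["Mainstream Pop", "Contemporary Synthpop"],
    mood="Euphoric",
    instruments=["Analog Moog bass", "808 drums", "fingerstyle acoustic guitar"],
    vocals="Female Soprano, breathy delivery",
)
print(prompt)
```

The validation step is the useful part: it catches overloaded prompts (five instruments, two moods) before a generation is spent on them.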

Structural Meta-Tags for Song Architecture

Beyond the GMIV style box, experts use bracketed meta-tags within the lyrics section to dictate song structure and energy flow. The Suno AI Meta Tags guide by Jack Righteous provides a comprehensive reference:

[Intro] - Establishes acoustic palette, keeps movement quiet

[Verse 1][Energy: Low] - Sparse, rhythmic delivery with lower density

[Pre-Chorus] - Shortens phrasing, increases harmonic anticipation

[Chorus][Energy: High][anthemic chorus | stacked harmonies] - Maximum instrumentation, bass drop, layered backing vocals

[Bridge][Energy: Medium] - Shifts harmonic center, provides contrast

[Outro][fade out] - Gradual wind-down

The pipe symbol (|) allows tag stacking for the chorus, triggering the AI to introduce multiple simultaneous effects. According to a popular Reddit thread on r/SunoAI where a user tested 200+ generations, emotional language (“desperate,” “euphoric”) consistently produces better results than cold technical terms (“minor key with reverb”). The phrase “building intensity” outperforms “crescendo,” and “anthemic chorus” is one of the most reliably effective tags.
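The tag layout described here can be assembled programmatically, which helps keep section headers consistent across many songs. A minimal sketch follows, assuming the bracket and pipe conventions shown above. The function names and placeholder lyric lines are hypothetical.

```python
def tagged_section(name, lines, energy=None, effects=()):
    """Render one song section: bracketed tags on the first line, lyrics below."""
    header = f"[{name}]"
    if energy:
        header += f"[Energy: {energy}]"
    if effects:
        # The pipe stacks several effects inside a single bracket.
        header += "[" + " | ".join(effects) + "]"
    return "\n".join([header, *lines])


def build_lyric_sheet(sections):
    """Join rendered sections with a blank line between them."""
    return "\n\n".join(tagged_section(**section) for section in sections)


sheet = build_lyric_sheet([
    {"name": "Verse 1", "energy": "Low", "lines": ["placeholder verse line"]},
    {
        "name": "Chorus",
        "energy": "High",
        "effects": ["anthemic chorus", "stacked harmonies"],
        "lines": ["placeholder chorus line"],
    },
    {"name": "Outro", "effects": ["fade out"], "lines": []},
])
print(sheet)
```

The resulting text is what would be pasted into the lyrics field, with the GMIV style prompt going into the separate style box.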

Prompt Types by Production Phase

| Prompt Type | What to Include | Best Use Case |
|---|---|---|
| Concise | Genre, BPM, 2-3 instruments, vocal tag | Rapid idea sketching, quick background tracks for videos |
| Balanced | Genre, mood, BPM range, instrumentation, structure hint | Early production demos, arrangement testing for projects |
| Detailed | All above + artist references, effects, groove notes, lyrical theme | Near-final mockups, feeding DAW for stem extraction |

For AI video creators: Most background music needs can be handled with concise or balanced prompts. A prompt like “Cinematic orchestral, Hopeful, 90 BPM, strings and piano, no vocals” will generate usable background music in seconds. Save the detailed prompting for hero tracks, intros, or music videos where the audio is the centerpiece.

The AI Video Creator’s Full-Stack Production Pipeline

This is where Suno becomes most valuable for AI Video Bootcamp readers. The combination of AI music generation with AI video tools creates a complete production pipeline that would have required a full team just two years ago. As we explored in The State of AI Video Creation in 2026, the one-person studio is now a reality - and Suno is the audio engine that makes it work.

Step-by-Step: From Text to Finished AI Music Video

Step 1 - Script and Concept: Use Claude or ChatGPT to develop your storyline, scene descriptions, and lyrics. Feed it your creative direction and iterate until the concept is tight.

Step 2 - Music Generation (Suno): Take your lyrics into Suno with a GMIV-formatted style prompt. Generate 3-5 variations and select the strongest. Use Studio to extract stems if you need isolated vocals or instrumentals.

Step 3 - Visual Concept Art: Generate detailed stills with Midjourney, FLUX, or DALL-E/Imagen 4. These become the visual foundation for your scenes - character designs, environments, mood boards.

Step 4 - Video Generation: Animate your stills with Kling 2.1 (best quality-to-cost ratio for character consistency), Runway Gen-4 (most cinematic output), Veo 3 (built-in audio generation), or Seedance 2.0 (strong motion quality).

Step 5 - Lip-Sync (if needed): For music videos with a singing performer, take a screenshot of your AI-generated singer and load it into Hedra. Feed in the Suno track and Hedra syncs the lip movements to the vocal track automatically.

Step 6 - Voiceover Layer (if needed): Add narration with ElevenLabs for documentary-style or explainer content where music serves as background.

Step 7 - Final Assembly: Bring everything into DaVinci Resolve (free) for editing, color grading, and final audio mixing. Export and publish.
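The seven steps can be written down as a simple plan in which the two "if needed" steps are switched on per project. This is an illustrative sketch: the `Stage` class and `plan` function are hypothetical, and the tool names are taken from the steps above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    name: str
    tools: tuple
    optional: bool = False


PIPELINE = (
    Stage("Script and concept", ("Claude", "ChatGPT")),
    Stage("Music generation", ("Suno",)),
    Stage("Visual concept art", ("Midjourney", "FLUX", "DALL-E", "Imagen 4")),
    Stage("Video generation", ("Kling 2.1", "Runway Gen-4", "Veo 3", "Seedance 2.0")),
    Stage("Lip-sync", ("Hedra",), optional=True),
    Stage("Voiceover layer", ("ElevenLabs",), optional=True),
    Stage("Final assembly", ("DaVinci Resolve",)),
)


def plan(include_optional=()):
    """Return the stages to run, keeping optional ones only when requested by name."""
    return [s for s in PIPELINE if not s.optional or s.name in include_optional]


# A music video with a singing performer needs lip-sync but no narration.
for number, stage in enumerate(plan(include_optional={"Lip-sync"}), start=1):
    print(f"{number}. {stage.name}: {' / '.join(stage.tools)}")
```

An explainer video would instead pass `{"Voiceover layer"}`, and a pure background-music piece would pass nothing and run only the five core stages.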

Total cost for this entire pipeline: roughly $50-$100/month in tool subscriptions. Total time for a 2-3 minute music video: 2-4 hours. According to MindStudio’s AI filmmaking cost breakdown, a comparable production with traditional tools and freelancers would cost $5,000-$20,000 and take 2-4 weeks.

Automation Pipelines for Scale

For creators who want to produce content at volume, n8n workflow templates can automate much of this pipeline. Pre-built automations exist that chain Suno API (via third-party providers) with GPT-4 for scripting, FLUX for imagery, Runway for video generation, and Creatomate for final assembly. This makes it possible to produce entire music playlist channels for YouTube with minimal manual intervention.

Suno AI vs. Competitors: Which AI Music Generator Should You Use?

The AI music generation space has matured rapidly. Here is how the major platforms compare as of April 2026, specifically through the lens of what matters for video creators. For a deeper dive, see this Suno v5.5 vs Lyria 3 Pro vs Udio comparison by CometAPI and Jam.com’s AI music generator roundup.

| Platform | Best For | Max Length | Vocal Quality | Instrumental Quality | Starting Price |
|---|---|---|---|---|---|
| Suno V5.5 | Complete songs, music videos, creative content | 8+ min | Best in class | Excellent | Free / $10 mo |
| Udio | Professional instrumentals, background music | 8+ min | Very Good | Best in class | Free / $10 mo |
| Google Lyria 3 Pro | Quick generation within Google ecosystem | 3 min | Good | Good | Free (MusicFX) |
| Stable Audio (Stability AI) | Enterprise, sound effects, audio inpainting | Varies | Limited | Good | Enterprise pricing |
| ElevenLabs Music | Creators already using ElevenLabs for voice | Varies | Good | Good | Part of ElevenLabs plans |

Bottom line for video creators: Suno is the best all-around choice, especially if your content involves vocals or you want creative flexibility. Udio is the pick when you specifically need pristine instrumental background music for corporate or commercial work. Google Lyria 3 Pro is a free option for quick, casual generation, but its 3-minute cap is limiting. Stability AI’s Stable Audio is enterprise-focused and not ideal for individual creators.

Monetizing AI Music as a Video Creator

The creator economy around Suno has grown far beyond simple music streaming. For AI video creators who already have production skills, AI music opens multiple revenue streams that compound with existing capabilities. As we detailed in our analysis of people making $10K+/month with AI video, the most successful operators diversify across several income channels.

Revenue Stream 1: Faceless Music YouTube Channels

Faceless music channels represent one of the fastest-growing content categories in 2026. As LiveMusicBlog’s guide to faceless music channels explains, the workflow is straightforward: generate music with Suno, create audio-reactive visuals or AI-generated video backgrounds, and upload to YouTube. Because Suno-generated music is not registered in any Content ID database, these tracks will not trigger copyright claims. Channels in this niche can scale quickly because each video requires minimal manual effort.

Revenue Stream 2: AI Music Video Production

Full AI music videos - combining Suno tracks with AI-generated visuals from Kling, Runway, or Veo - are a premium content format. Creators who develop a distinctive visual and musical style can build dedicated fan bases entirely within the generative ecosystem. Suno’s “Hooks” feature (a TikTok-style vertical discovery feed built into the platform) helps distribute 10-60 second highlight reels that drive listeners to full tracks.

Revenue Stream 3: B2B Music Licensing

Skilled Suno operators are functioning as “AI Music Supervisors,” charging flat fees to generate, refine, and license custom audio for commercial clients. The typical client profile: content creators, podcasters, local businesses needing TV ad music, and digital marketing agencies who need mood-tailored background music but lack the budget for human composers or the technical knowledge to use AI tools themselves. According to this r/passive_income thread on Reddit, independent operators report earning $3,000-$5,000 per month through this service model.

Revenue Stream 4: Prompt Engineering and Education

The specialized knowledge required to generate professional-quality music with Suno has itself become a highly monetizable asset. Creators are selling prompt libraries, meta-tag blueprints, workflow guides, and running paid Discord communities. YouTube channels dedicated to AI music production are monetized through ad revenue, affiliate marketing for VST plugins, and premium Patreon memberships.

Revenue Stream 5: Streaming Distribution

While fully AI-generated tracks face “Distribution Shadowbans” on platforms like Spotify (excluded from algorithmic discovery feeds like Discover Weekly and Release Radar), hybrid tracks that incorporate a human-performed layer can bypass these filters. As Soundverse’s analysis of AI music ownership explains, platforms now categorize uploads as human-created, AI-assisted, or fully AI-generated - each with different distribution treatment. The workflow involves extracting stems from Suno, replacing at least one element with a human-performed track using professional VST instruments, and re-rendering the final master. This reclassifies the work from “purely synthetic” to “AI-assisted,” unlocking organic algorithmic reach and establishing a stronger legal claim to copyright.

The Legal Landscape: What Creators Need to Know in 2026

The legal framework around AI-generated music underwent a historic transformation in late 2025 that every creator should understand.

The RIAA Lawsuits and Resolution

On June 24, 2024, the Recording Industry Association of America (RIAA) filed landmark federal lawsuits against both Suno and Udio on behalf of Universal Music Group, Sony Music Entertainment, and Warner Music Group. The labels accused the AI companies of industrial-scale, unlicensed copying of protected sound recordings and sought damages of up to $150,000 per infringed work.

In its legal defense, as tracked by McKool Smith’s AI litigation updates, Suno argued Fair Use - that its AI does not copy or store existing recordings but rather analyzes acoustic patterns, harmonic structures, and genre conventions to learn the mathematical rules of music, analogous to a human musician learning by listening.

The story shifted dramatically in late 2025. In October, Universal Music Group and Udio announced a historic settlement that ended their litigation. This was not just a financial payout - it was a strategic agreement to build a new commercial AI music platform trained exclusively on authorized, licensed music. In November, as CompleteMusicUpdate reported, Suno and Warner Music Group reached a similar settlement.

The 2026 Compliance Framework

These settlements established a three-tiered compliance architecture that now defines the industry standard, as outlined by Soundverse AI’s ownership and rights analysis:

1. Dataset Transparency: AI developers must disclose their data sources and clear rights before training models, ending the era of unregulated web scraping.

2. Attribution Tracking: AI-generated tracks must contain embedded digital metadata linking the output back to the specific datasets, artists, and models used during generation.

3. Automated Licensing: Integrated digital ledgers handle real-time royalty tracking and automated financial payouts to rights holders whose data contributed to the generative model.

What this means for creators: The legal uncertainty that once hung over AI music has largely resolved. The industry has moved from “sue and destroy” to “license and partner.” Using Suno on a paid plan with proper metadata attribution is now on firm legal ground. The key best practices remain: do not register AI-generated music with Performing Rights Organizations (ASCAP, BMI, GEMA), list “Generated with Suno” in your track metadata, and rely on mechanical streaming royalties rather than attempting to claim performance royalties.

Suno API: Building Automated Music Pipelines

For developers and technically inclined creators who want to integrate AI music generation into automated workflows, the Suno API ecosystem is evolving rapidly.

Suno (the company) still prioritizes its web-based consumer platform and has not released a widely available public API. Beta access is available to select partners only. However, as AIML API’s comprehensive Suno API review details, third-party API providers like sunoapi.org, AIML API, and Evolink have filled this gap, offering clean REST APIs that handle account management, concurrency, and session complexity behind the scenes.

A typical API integration follows this pattern: authenticate with a bearer token, send a POST request with your payload (lyrics, tags, style, model selection like suno-v5 or v5-turbo), receive a task ID, and poll a status endpoint or handle a webhook for the completed audio URL. Full generation takes roughly 20-30 seconds. Output is watermark-free and commercially usable.
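The submit-then-poll pattern can be sketched with the Python standard library. Everything provider-specific here is an assumption: the base URL, the `/generate` and `/tasks/{id}` paths, and the `task_id`, `status`, and `audio_url` field names are hypothetical placeholders, because each third-party provider defines its own. Check the provider's documentation before adapting this.

```python
import json
import time
import urllib.request

# Hypothetical base URL; each provider publishes its own endpoints.
BASE_URL = "https://api.example.com/v1"


def http_json(method, url, token, body=None):
    """Send a JSON request with a bearer token and return the decoded JSON reply."""
    data = json.dumps(body).encode("utf-8") if body is not None else None
    request = urllib.request.Request(url, data=data, method=method)
    request.add_header("Authorization", f"Bearer {token}")
    request.add_header("Content-Type", "application/json")
    with urllib.request.urlopen(request, timeout=30) as response:
        return json.loads(response.read().decode("utf-8"))


def generate_track(payload, token, send=http_json, poll_seconds=5.0, max_polls=24):
    """Submit a generation task, then poll until it completes or fails.

    `send` is injectable so the flow can be exercised without a network call.
    """
    task = send("POST", f"{BASE_URL}/generate", token, payload)
    task_id = task["task_id"]  # assumed response field
    for _ in range(max_polls):
        status = send("GET", f"{BASE_URL}/tasks/{task_id}", token)
        if status["status"] == "completed":  # assumed status values
            return status["audio_url"]  # assumed response field
        if status["status"] == "failed":
            raise RuntimeError(f"task {task_id} failed")
        time.sleep(poll_seconds)
    raise TimeoutError(f"task {task_id} did not finish after {max_polls} polls")
```

A call might look like `generate_track({"lyrics": lyric_text, "tags": style_prompt, "model": "suno-v5"}, token=api_token)`, where the payload keys are again assumptions. Polling is the simpler of the two options mentioned above; a webhook avoids the repeated status requests but needs a publicly reachable endpoint to receive the callback.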

At scale, the cost through third-party providers can actually be lower than Suno’s consumer pricing. Based on the plan figures earlier in this guide, the consumer cost works out to approximately $0.015 per song at the Premier tier ($30 for ~2,000 songs) and $0.02 at Pro ($10 for ~500 songs), and bulk API pricing can undercut that for high-volume operations.

Pre-built n8n workflow templates already exist that chain Suno API generation with GPT-4 for scripting, Runway for video generation, and Creatomate for final video assembly - enabling fully automated content pipelines from text prompt to published video.

Suno AI by the Numbers: Key Statistics for 2026

| Metric | Figure | Source |
|---|---|---|
| Total signups | ~100 million | TechCrunch, Feb 2026 |
| Paid subscribers | 2 million | TechCrunch, Feb 2026 |
| Annual Recurring Revenue | $300 million | TechCrunch, Feb 2026 |
| YoY revenue growth | 404% | Sacra, 2026 |
| Valuation (Series C) | $2.45 billion | TechCrunch, Nov 2025 |
| Series C funding raised | $250 million | TechCrunch, Nov 2025 |
| Daily track generation | 7 million songs/day | Billboard / Suno CEO interview |
| Daily music streamed | 20 million minutes/day | Fueler, 2026 |
| Cost per song (Premier / Pro) | ~$0.015 / ~$0.02 | Calculated from pricing tiers |
| Film/TV music supervisors using AI | 40% | Soundverse, 2026 |
| Ad recall improvement with personalized AI audio | 60% higher | Soundverse, 2026 |

For more AI media statistics, see our comprehensive 60+ Generative AI Statistics for 2026 page with data across image, video, and audio generation.

Frequently Asked Questions

Can I use Suno AI music in my YouTube videos commercially?

Yes, but only on paid plans. Suno Pro ($10/month) and Premier ($30/month) subscribers receive full commercial rights to all generated music. Free tier users get zero commercial rights. Suno-generated music is not registered in any Content ID database, so it will not trigger copyright claims on YouTube. You own the output and can monetize it freely, as confirmed in Suno’s Terms of Service.

How does Suno AI compare to Udio for AI video creators?

Suno excels at complete songs with vocals, creative genre-blending, and fast iteration, making it ideal for music videos, faceless channels, and branded content. Udio (built by ex-Google DeepMind engineers) prioritizes instrumental fidelity and is rated as “almost indistinguishable from real recordings” for background music. For AI video creators who need background instrumentals, both work well. For full songs with vocals, Suno is the stronger choice. See this detailed comparison by CometAPI for audio samples.

Is AI-generated music from Suno legal to distribute on Spotify and Apple Music?

Yes, with important caveats. Paid Suno subscribers can distribute through aggregators like DistroKid. However, industry experts advise against registering AI music with Performing Rights Organizations (ASCAP, BMI) since AI cannot legally hold copyright as an author. Best practice is to list “Generated with Suno” in metadata and rely on mechanical streaming royalties only. The late-2025 settlements between Udio and Universal Music Group and between Suno and Warner Music Group have significantly clarified the legal landscape.

What is the best Suno prompting technique for professional-quality music?

Professional producers use the GMIV Framework: Genre (1-2 primary genres), Mood (single emotional anchor like “Euphoric” or “Melancholic”), Instruments (2-4 specific instruments like “Analog Moog bass, 808 drums”), and Vocals (gender, register, delivery style). This structured approach reduces wasted generations by up to 60%. Combine GMIV with structural meta-tags like [Intro], [Verse 1][Energy: Low], and [Chorus][Energy: High] in the lyrics section for precise control over song architecture. The Jack Righteous meta-tags guide is the best reference for this.

Can I clone my own voice with Suno AI?

Yes, as of Suno V5.5 (March 2026). The new Voices feature lets Pro and Premier subscribers train the model on their own singing voice. You must complete a verification step by matching a spoken phrase to confirm voice ownership. Your voice profile is private and only accessi